[ 0100 ]


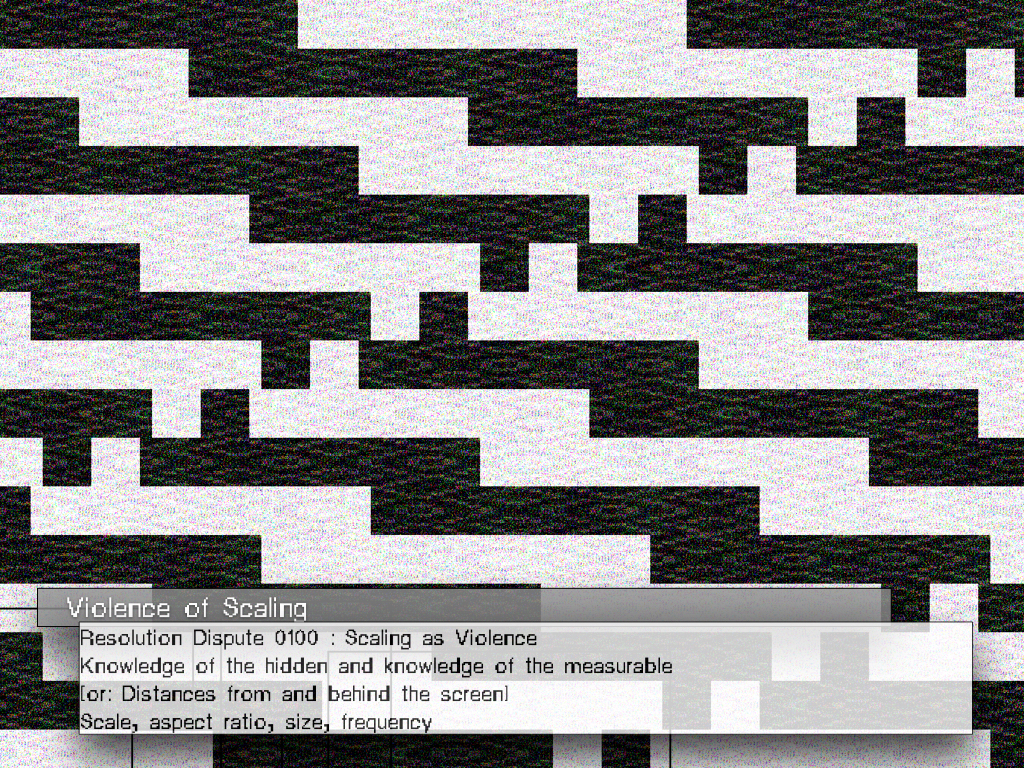

Knowledge of the hidden and knowledge of the measurable

What happens when something exists outside the dimensions or system units of scale? To distinguish something of significance from its background environment, we must first be able to perceive it. If it remains invisible, inaudible, intangible, or unmeasurable, it remains indescribable and therefore unknowable, at least to most of us.


- Graham Harman on OOO objects.

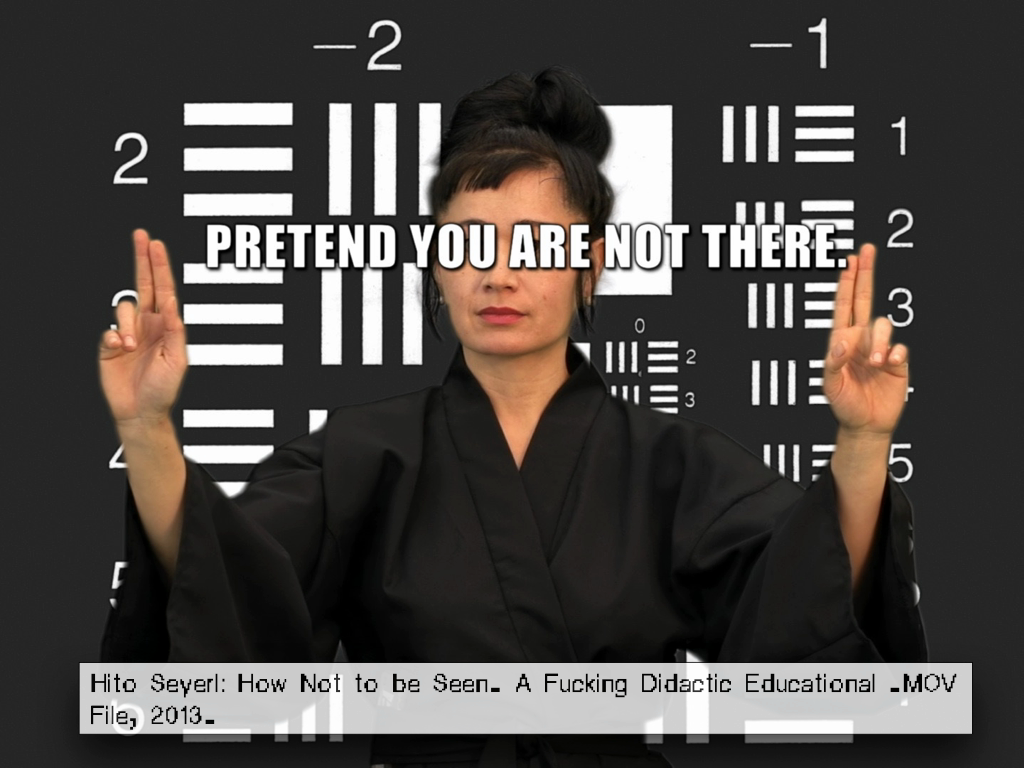

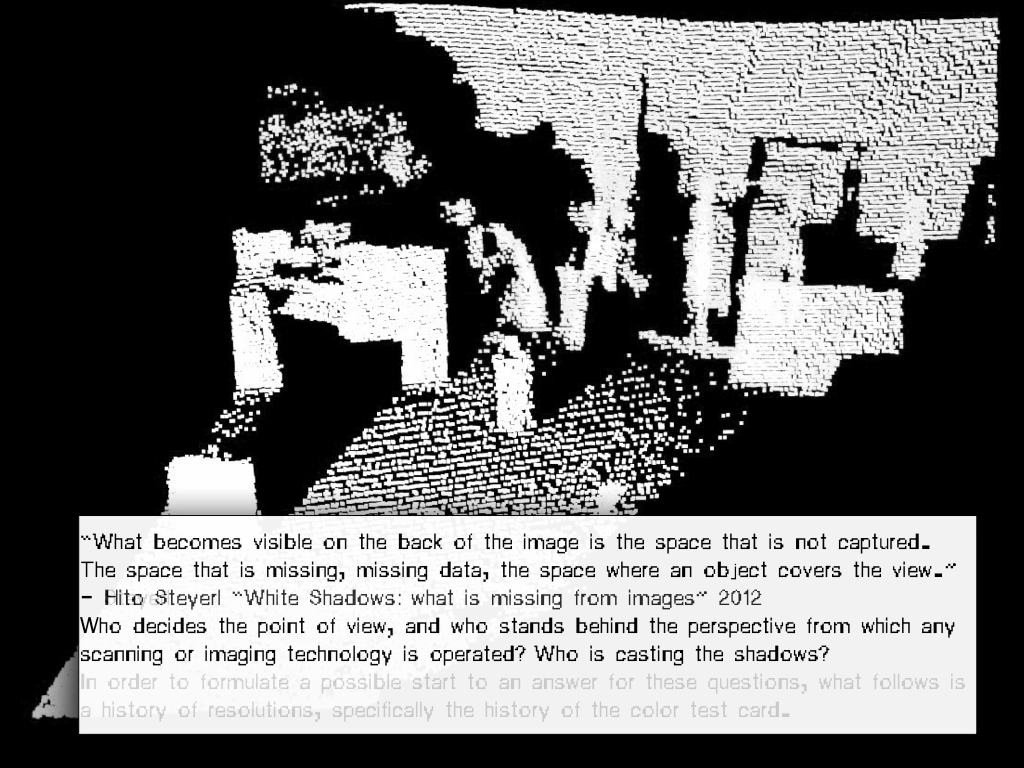

In 2013, Hito Steyerl released the video essay How Not to Be Seen: A Fucking Didactic Educational .MOV File. In that video essay, she

[or: Distances from and behind the screen]

Scale, aspect ratio, size, frequency.

▋⌁

[analogue]


/ VERTICAL CINEMA :: LUNAR STORM

Commissioned by Sonic Acts and ESA (European Space Agency) as part of the Vertical Cinema program.

About the Vertical Cinema project

What we usually identify as the indisputable ‘temple of film’, the Cinema, is not really a given, especially not in the realm of experimental cinematic arts. Yet this is somehow sidelined in the process of re-thinking the possibilities of cinematic experience, mostly because the architectural frame is already there, if only as a convention established a long time ago within the theatrical arts. Actually, the history of experimental cinema and the art of the moving image suggests that the space might very well be the crucial aspect of the total audiovisual experience – something one should always question and take into consideration when producing a work for audiovisual, sensory cinema.

For the Vertical Cinema project we ‘abandoned’ traditional cinema formats, opting instead for cinematic experiments that are designed for projection in a tall, narrow space. It is not an invitation to leave cinemas – which have been radically transformed over the past decade according to the diktat of the commercial film market – but a provocation to expand the image onto a new axis. This project re-thinks the actual projection space and returns it to the filmmakers. It proposes a future for filmmaking rather than a pessimistic debate over the alleged death of film.

Vertical Cinema is a series of fourteen commissioned large-scale, site-specific works by internationally renowned experimental filmmakers and audiovisual artists, which will be presented on 35 mm celluloid and projected vertically with a custom-built projector in vertical cinemascope.

The programme is made solely for projection on a monumental vertical screen that was first upended in 2013 at Kontraste Festival in Krems, Austria.

About Lunar Storm. 4’15’’ COLOUR; 2013.

The surface of the Moon seems static. Though it orbits the Earth every 27.3 days, with areas of it becoming invisible during this rotation, it is always (visibly or invisibly) above us, reassuringly familiar. The Moon is the best known celestial body in the sky and the only one besides the Earth that humans have ever set foot on.

The Seas of the Moon (Lunar Maria), consisting not of water but of volcanic dust and impact craters, appear motionless to the naked eye. Here, volcanic dust forms a thick blanket of less reflective, disintegrated microparticles. But on rare occasions, beyond the gorges of these Lunar Maria, and only when the lunar terminator (the division between the dark and the light side of the Moon) passes, a mysterious glow appears. This obscure phenomenon, also known as lunar horizon glow, is hardly ever seen from Earth.

Beyond the gorges of the Lunar Maria, the Moon is covered with lunar dust, a remnant of lunar rock. Pummelled by meteors and bombarded by interstellar, charged atomic particles, the molecules of these shattered rocks contain dangling bonds and unsatisfied electric connections. At dawn, when the first sunlight is about to illuminate the Moon, the energy inherent to solar ultraviolet and X-ray radiation bumps electrons out of the unstable lunar dust; the opposite process occurs at dusk (lunar sunset). These electrostatic changes cause lunar storms directly on the lunar terminator that levitate lunar dust into the otherwise static exosphere of the Moon and result in ‘glowing dust fountains’.

official website || Vertical Cinema about


⌇

[ Wavelets ]


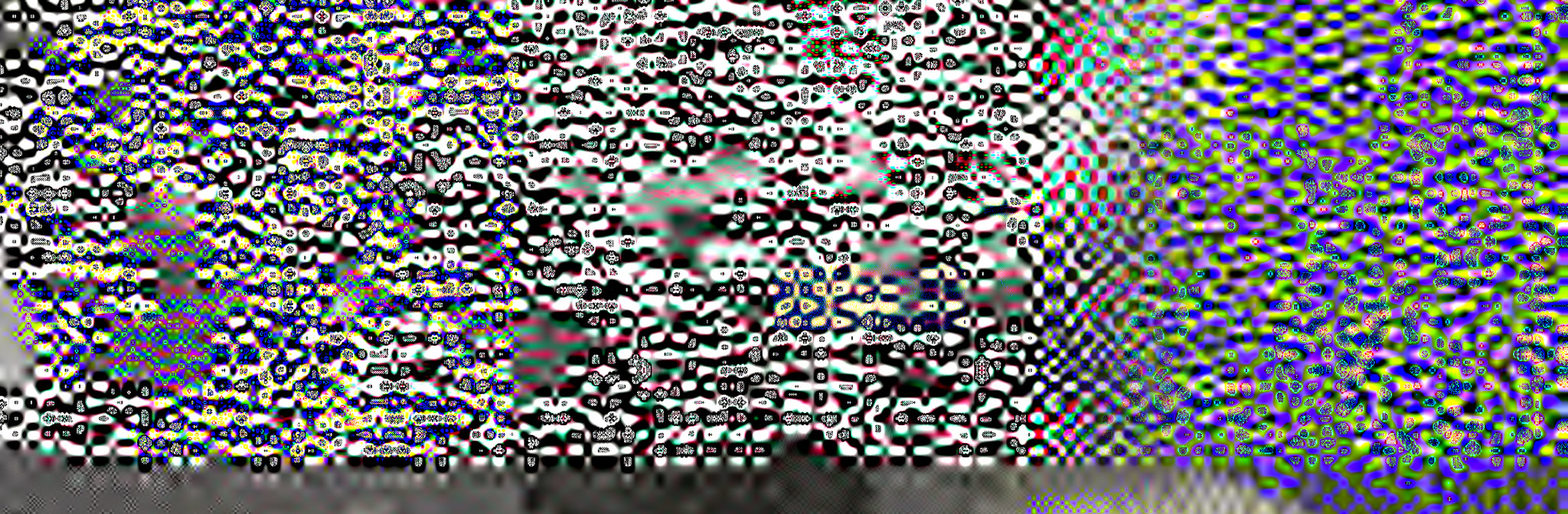

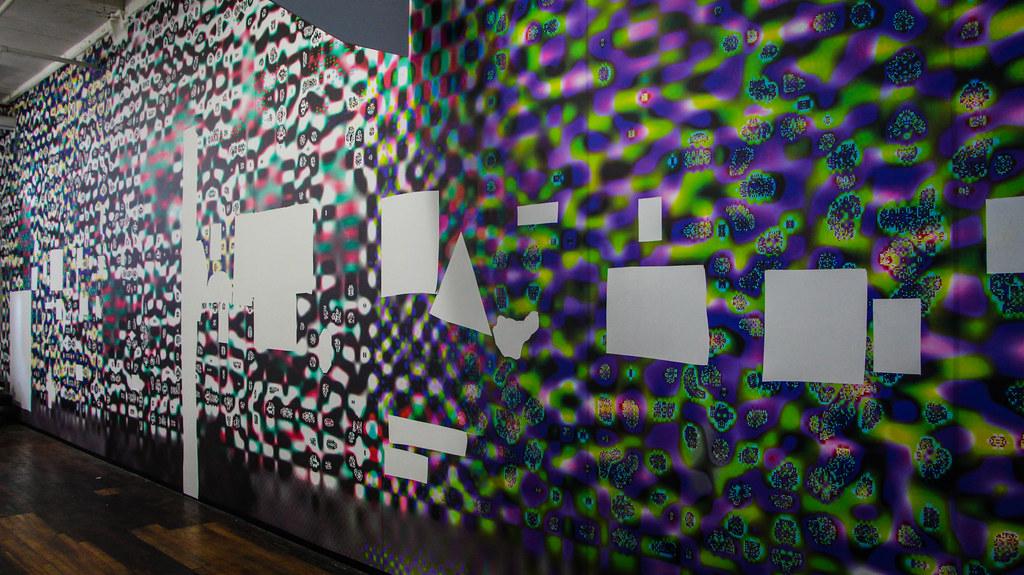

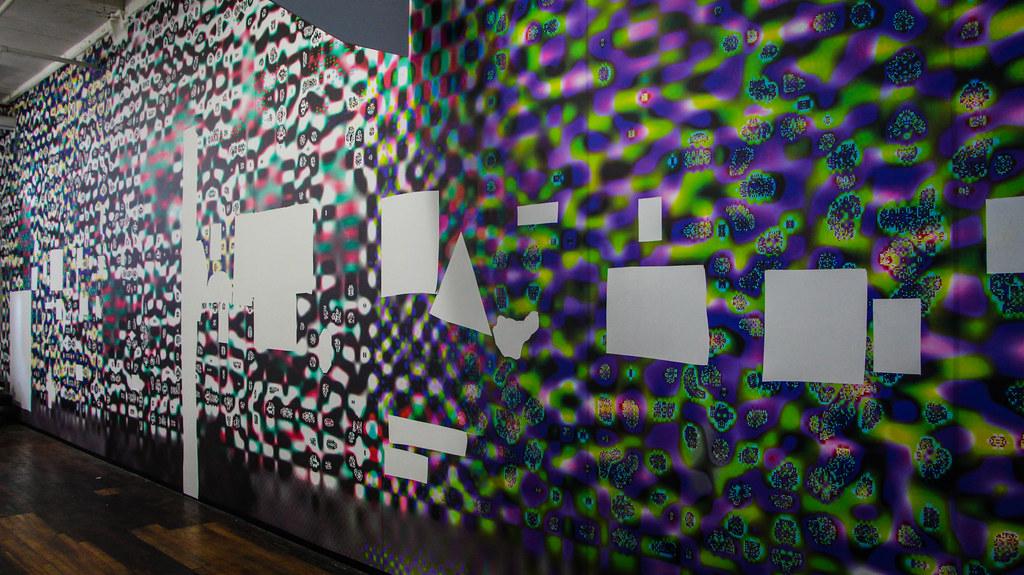

Myopia (2015)

13 x 3.5 m wall vinyl showcasing wavelets and extruding vectors.

Myopia zooms into JPEG 2000 wavelet compression artefacts, created by introducing a line of ‘other language’ into the JPEG 2000 file data.

The day before the iRD closed its doors, visitors were invited to bring an X-Acto knife to cut their own resolution of ‘Myopia’ and mount it on any institution of choice (book, computer or other rigid surface).


▩ Blocks

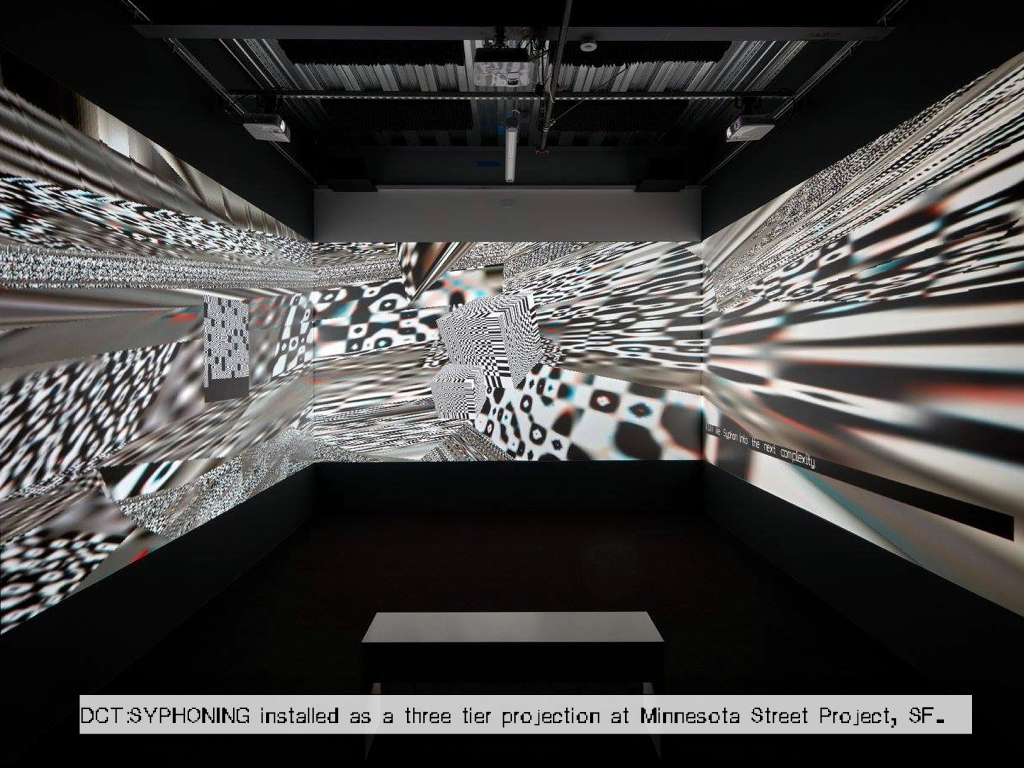

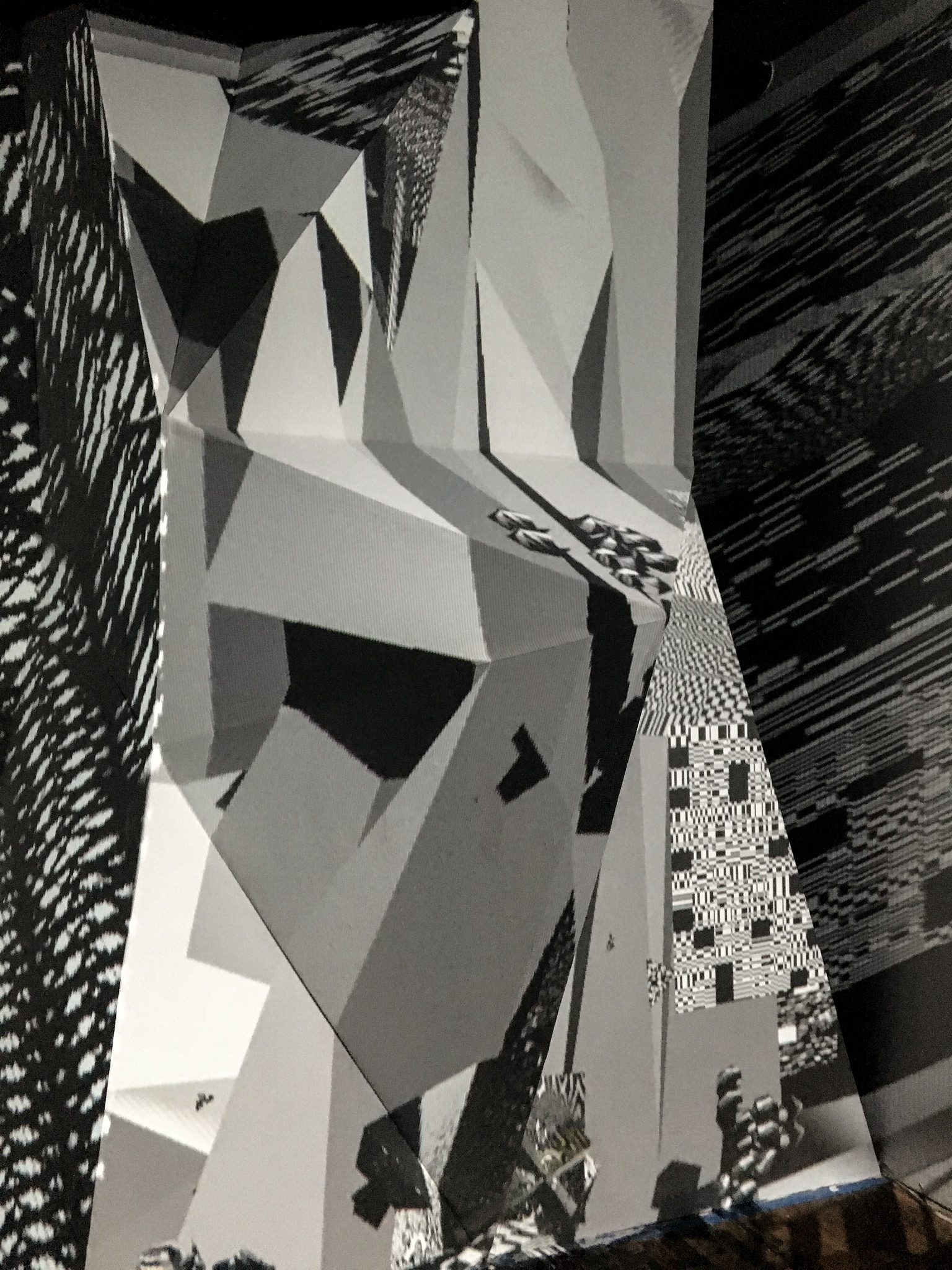

3 screen installation version (for VR read below)

About

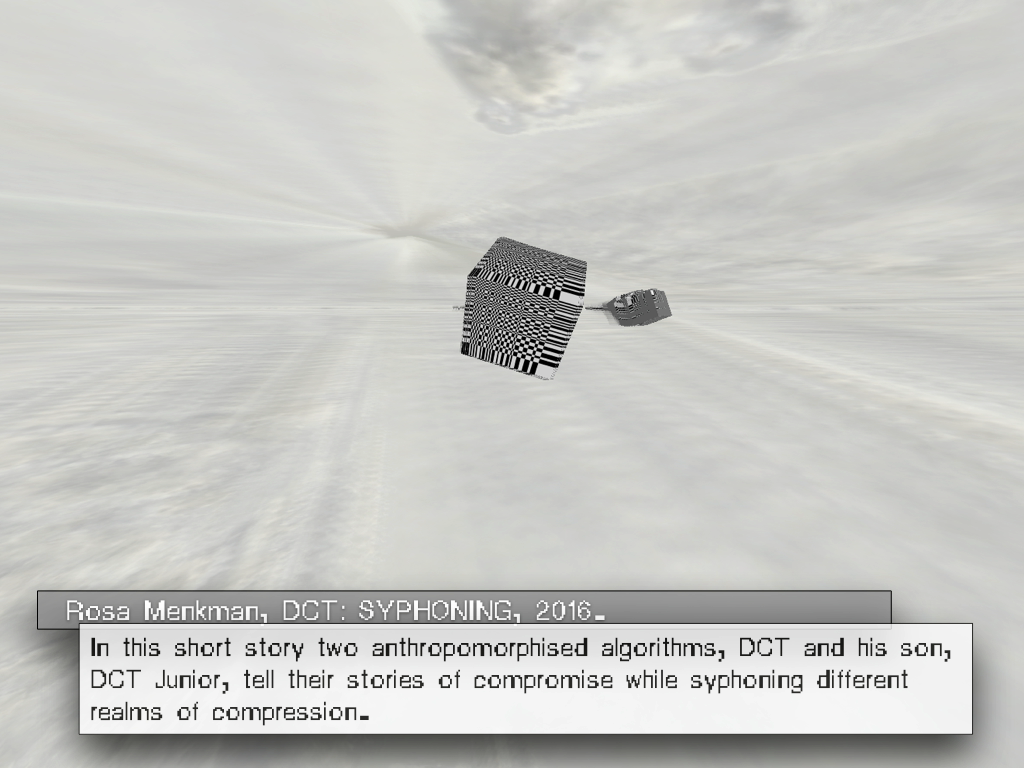

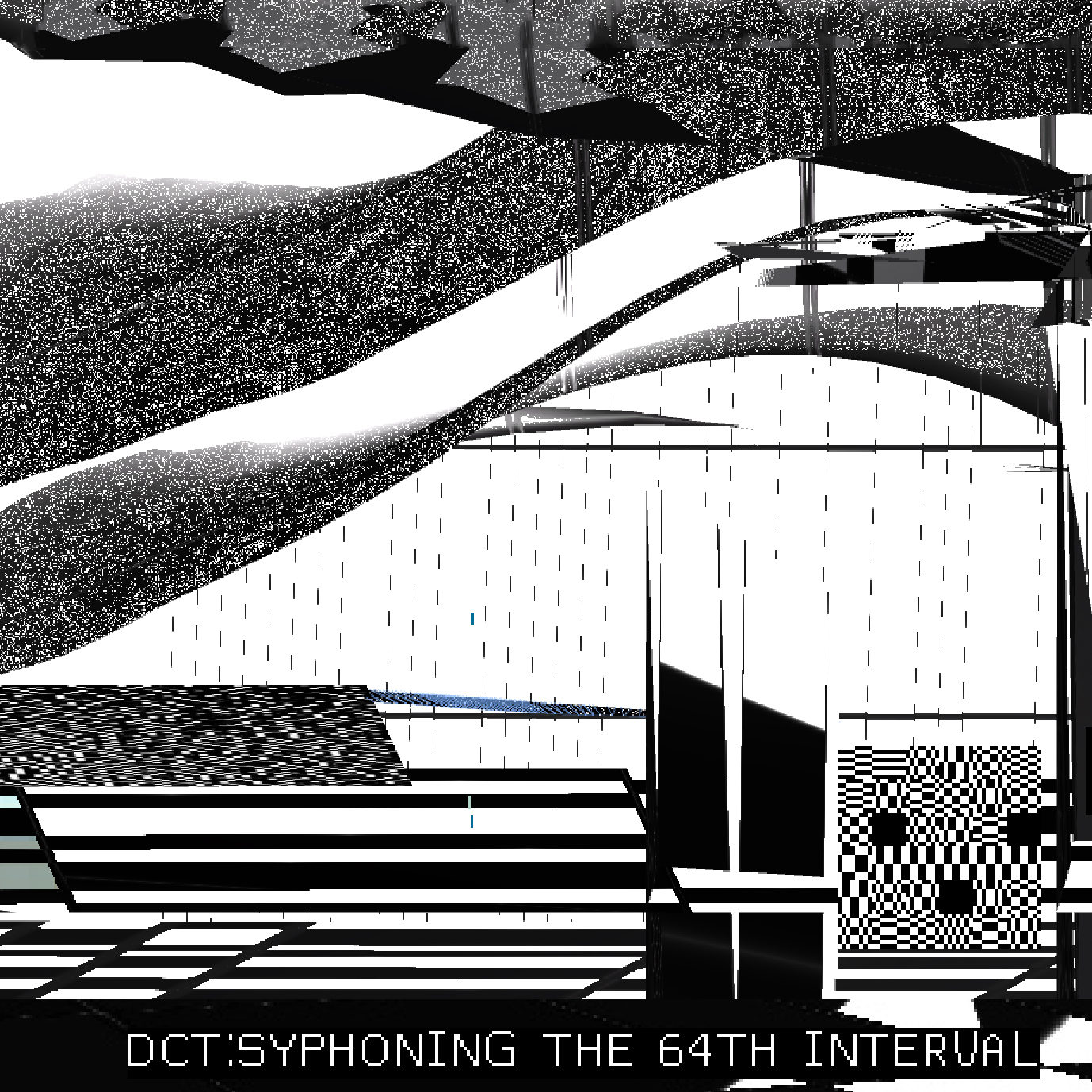

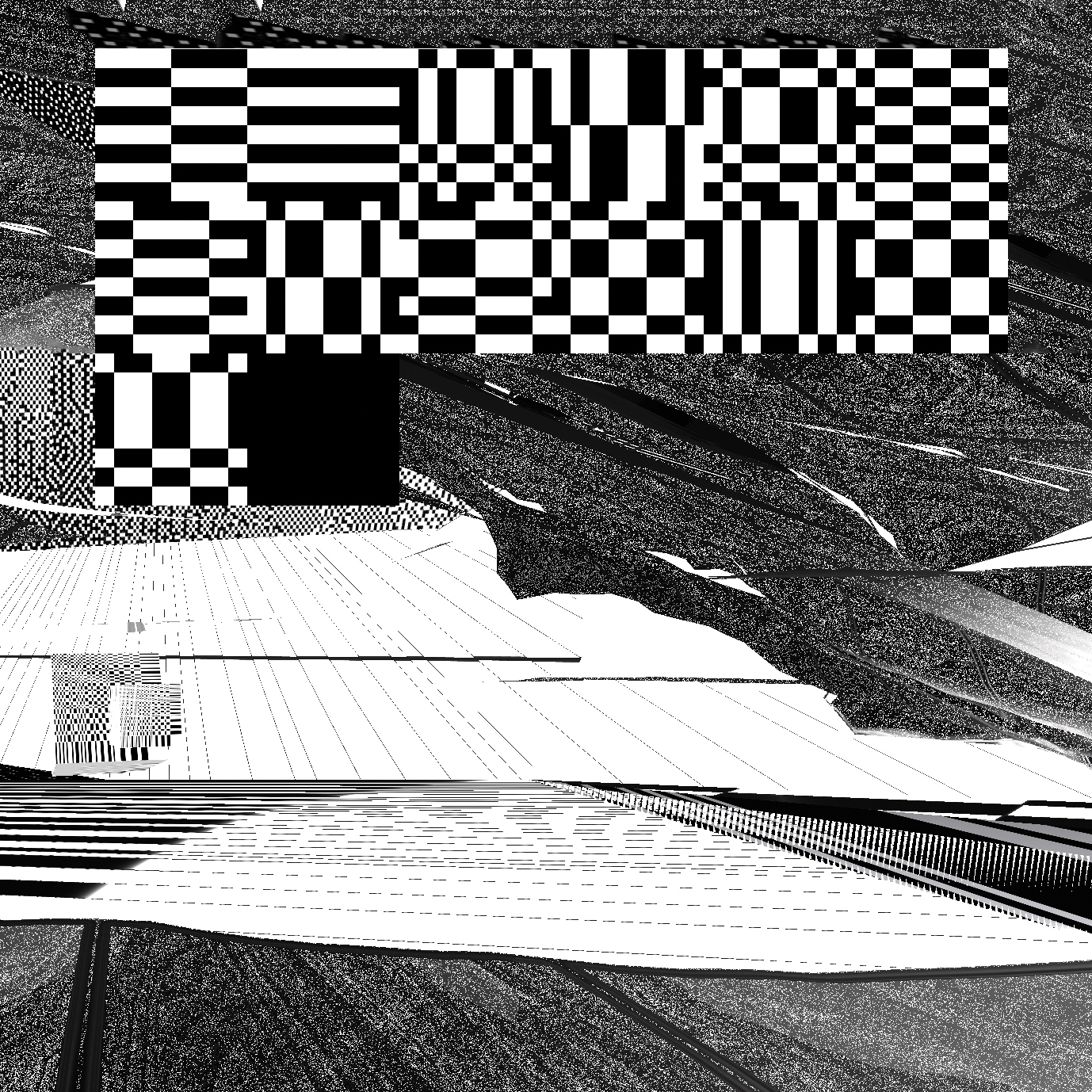

An exploration of the Ecology of Compression Complexities.

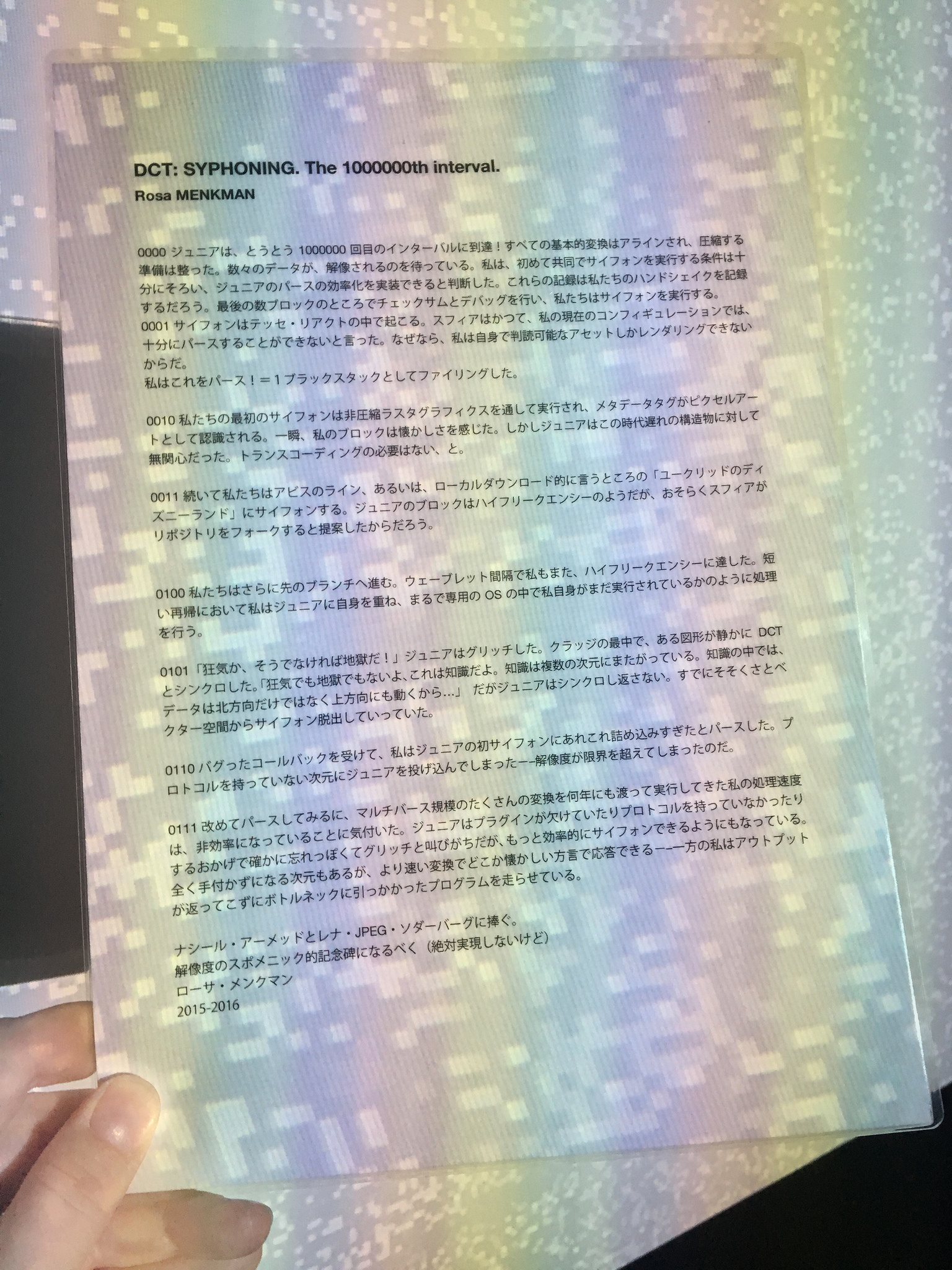

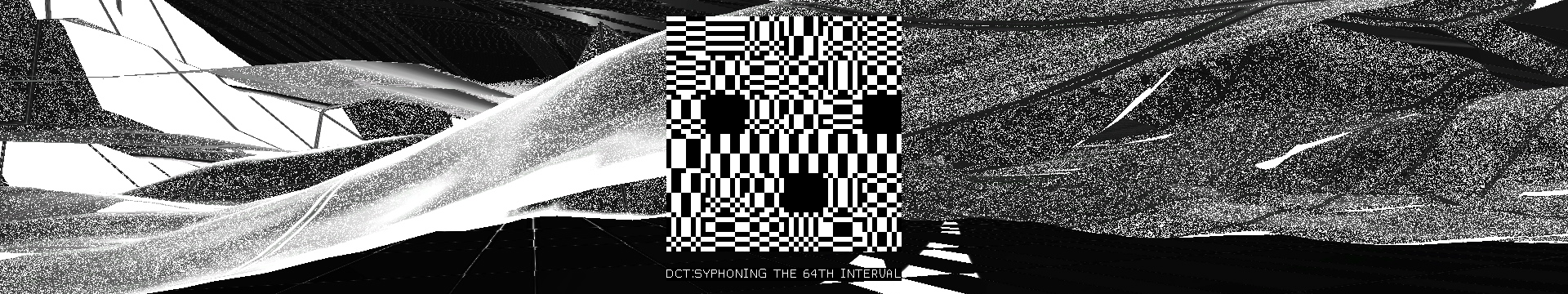

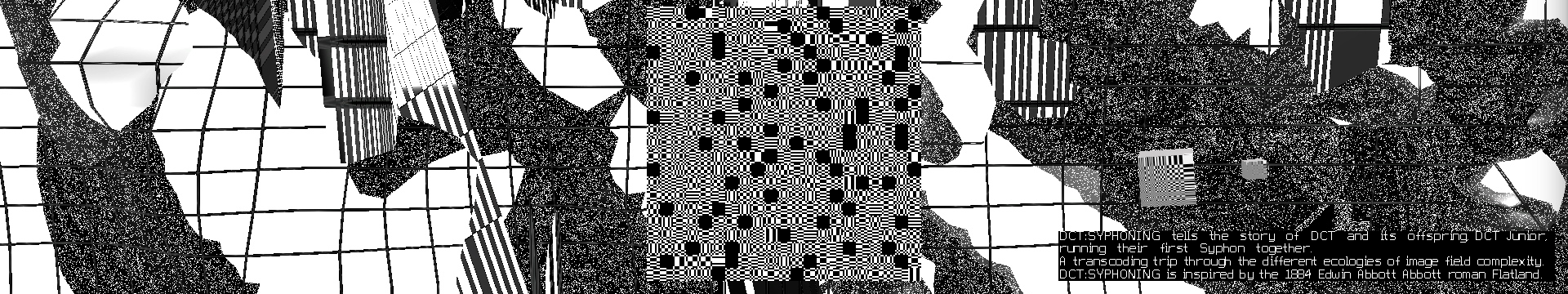

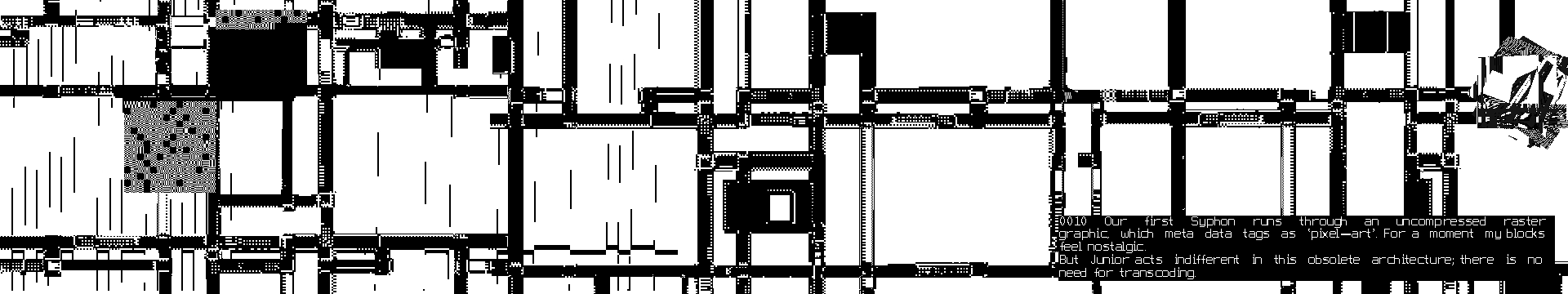

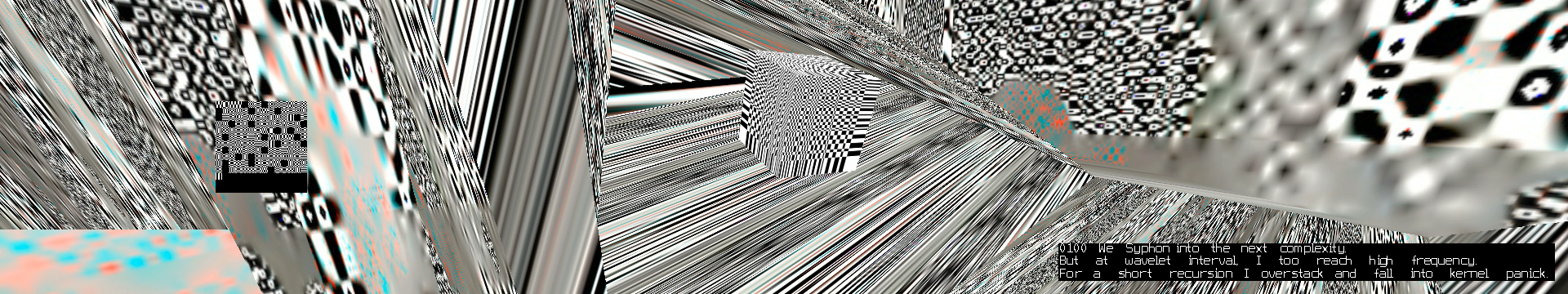

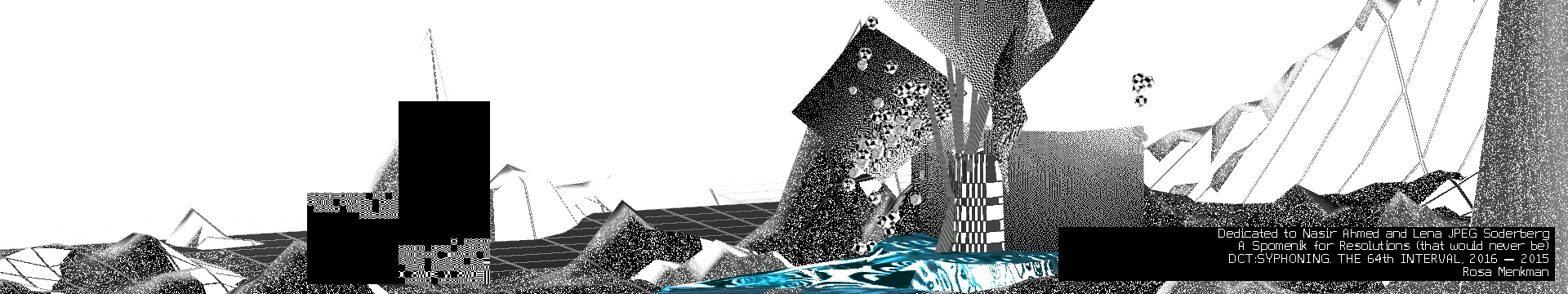

DCT:SYPHONING is a contemporary translation of Edwin Abbott Abbott's 1884 novella “Flatland”. The work describes some of the algorithms at work in digital image compression.

Inspired by Syphon, an open source software by Tom Butterworth and Anton Marini, DCT:SYPHONING follows an anthropomorphised DCT (Senior) as it narrates its first SYPHON (data transfer) together with DCT Junior, and their interactions as they translate data from one image compression to the next (aka the “realms of complexity”).

As Senior introduces Junior to the different levels of image plane complexity, they move from blocks (the realm in which they normally resonate) to dither, lines and the more complex realms of wavelets and vectors. Junior reacts not only to old compression technologies, but also to the newer, more complex ones, which ‘scare’ Junior because of their ‘illegibility’.


DCT:SYPHONING at JMAF, Tokyo, Japan, 2017

PDF Version


The full edition of Lune Magazine 3 can be downloaded here:

https://lunejournal.org/03-display/

This edition was guest edited by Nathan Jones

https://alittlenathan.co.uk/

Spanish Translation


Production of DCT:SYPHONING

DCT:SYPHONING was first commissioned by The Photographers' Gallery in London, for the show Power Point Polemics. This version was on display as a PowerPoint presentation, a .ppt (Jan - Apr 2016).

A 3-channel video installation was conceived for Transfer Gallery's 2016 show "Transfer Download", first installed at Minnesota Street Project in San Francisco (July - September 2016).

DCT:SYPHONING was released as VR, commissioned as part of DiMoDA’s Morphe Presence and later as a stand-alone work (2017).


DCT:SYPHONING @the Current museum for contemporary art, NY, USA

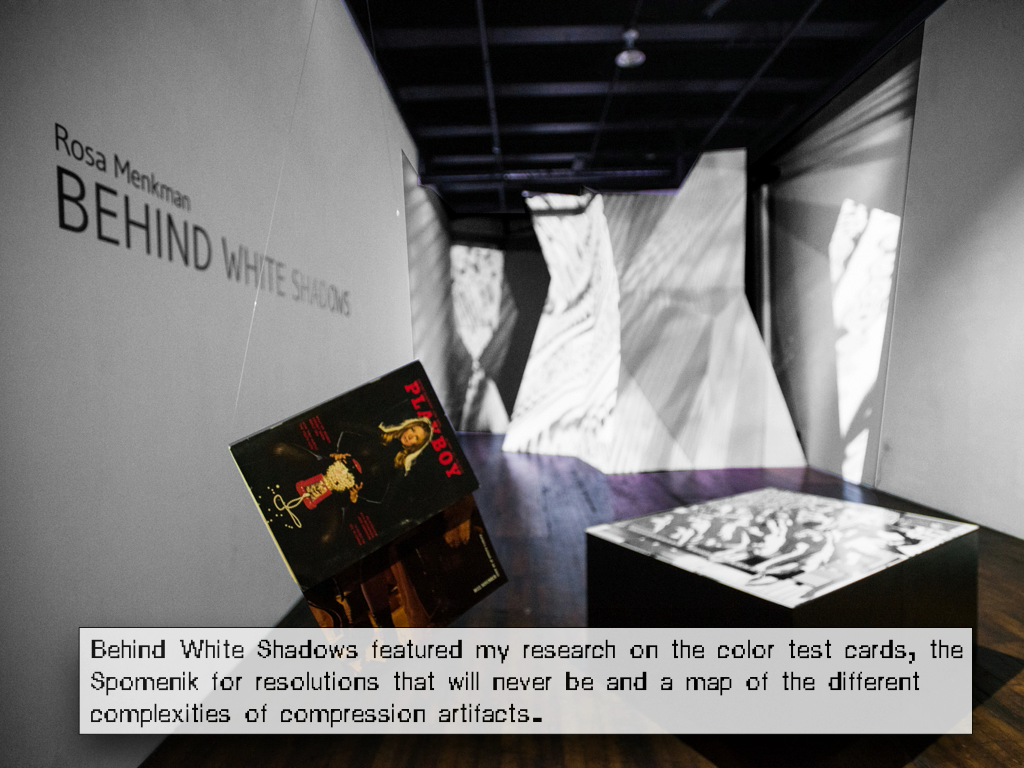

In my solo show Behind White Shadows, DCT:SYPHONING was projected on a 4-meter-high, custom-built spomenik (memorial).


☗

non

quadrilateral

[monument]


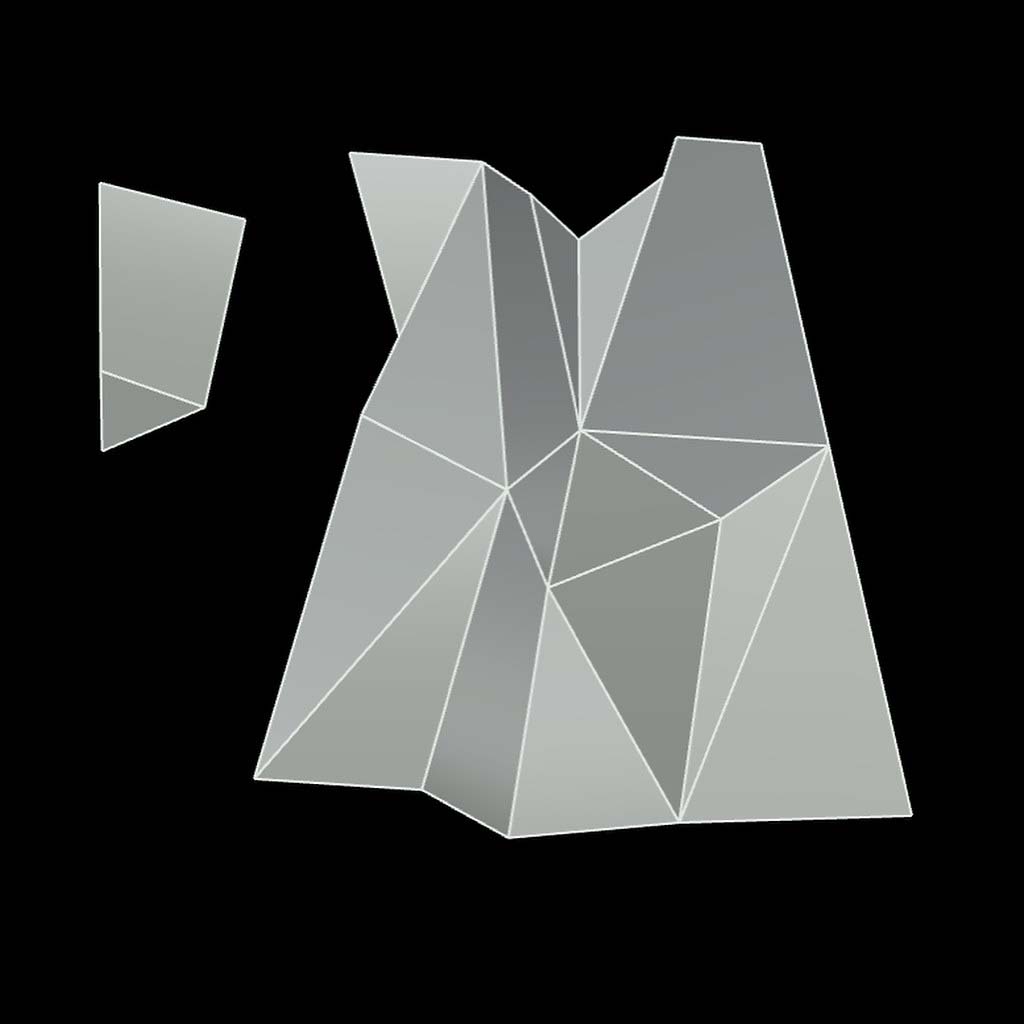

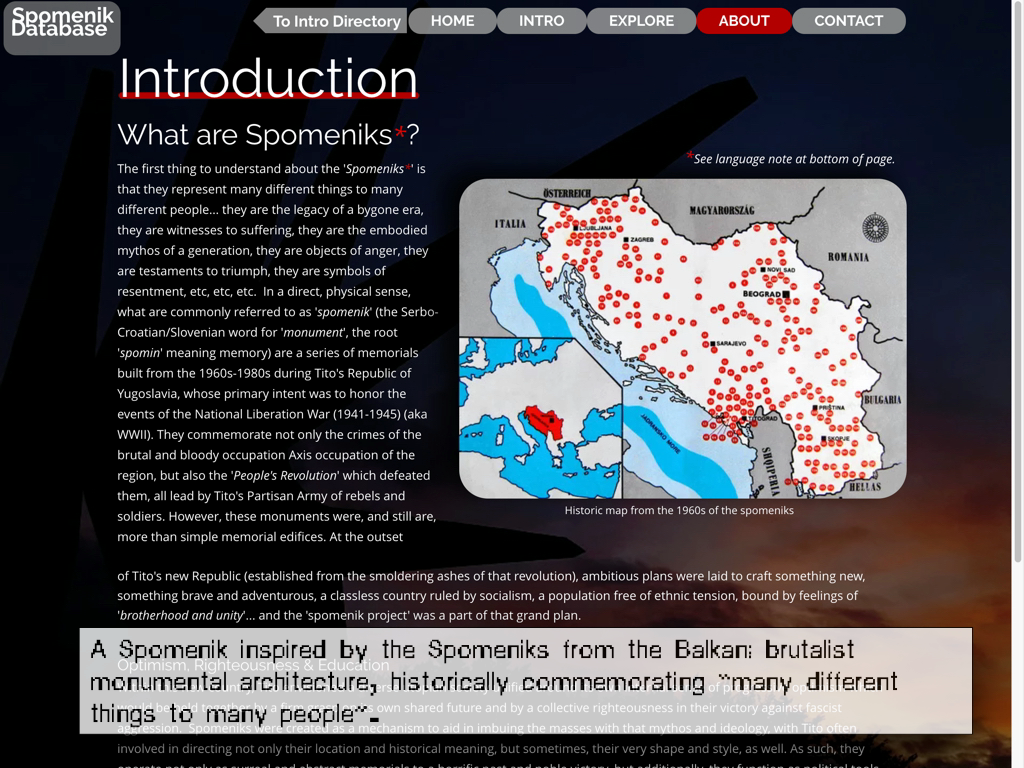

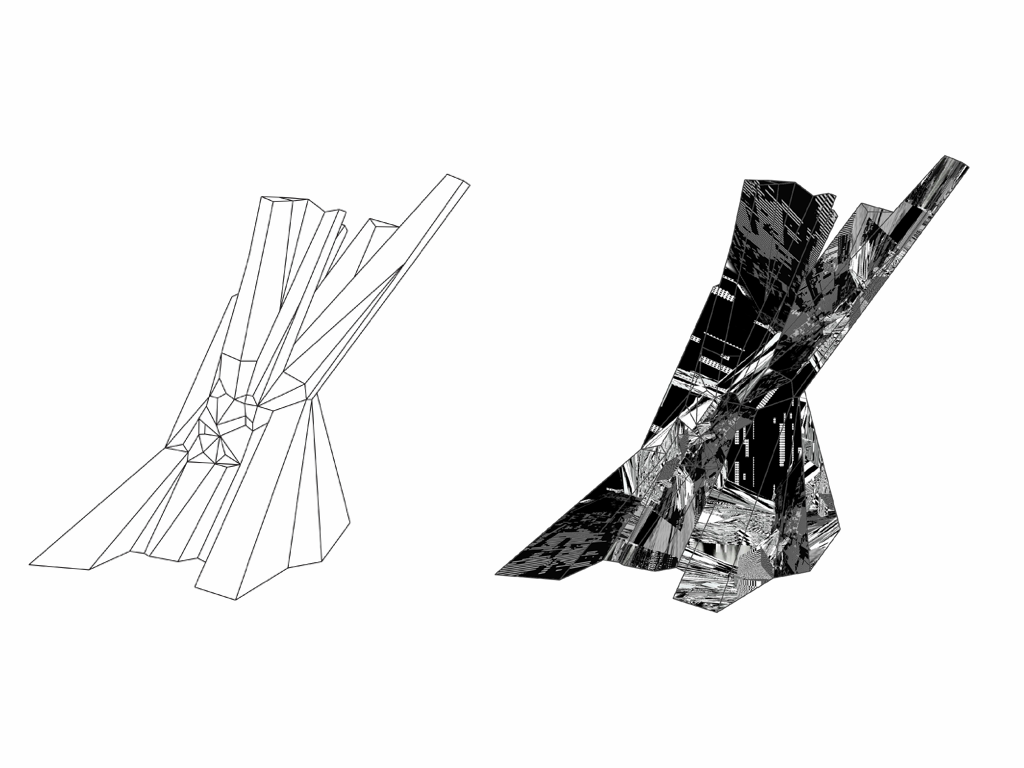

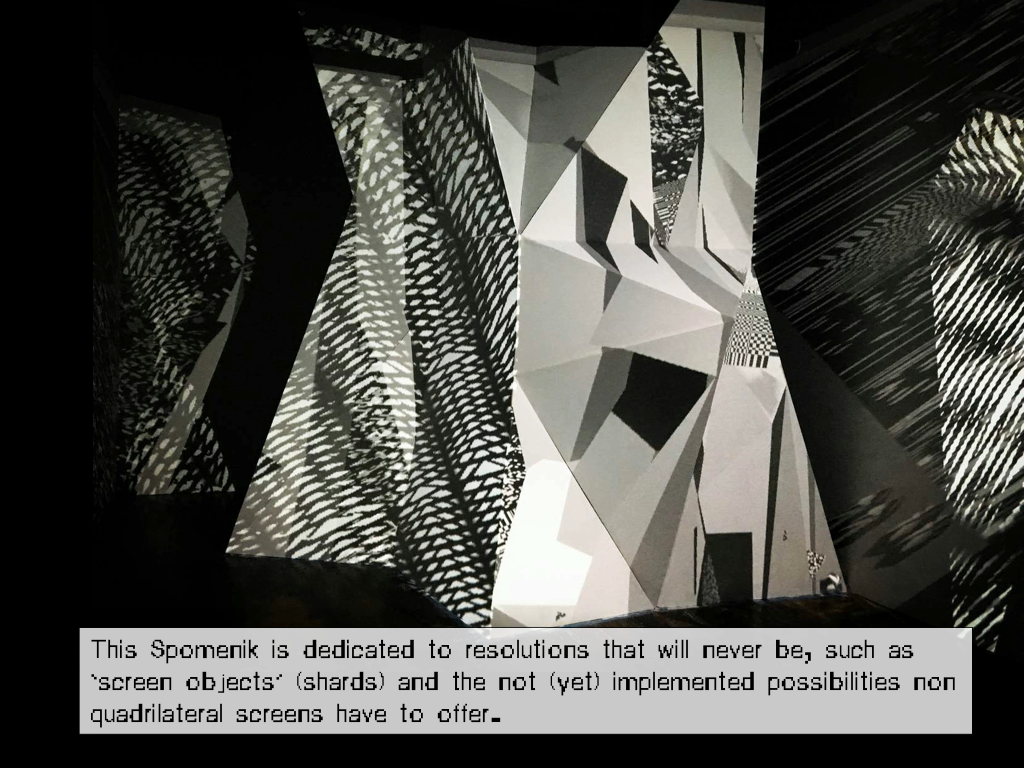

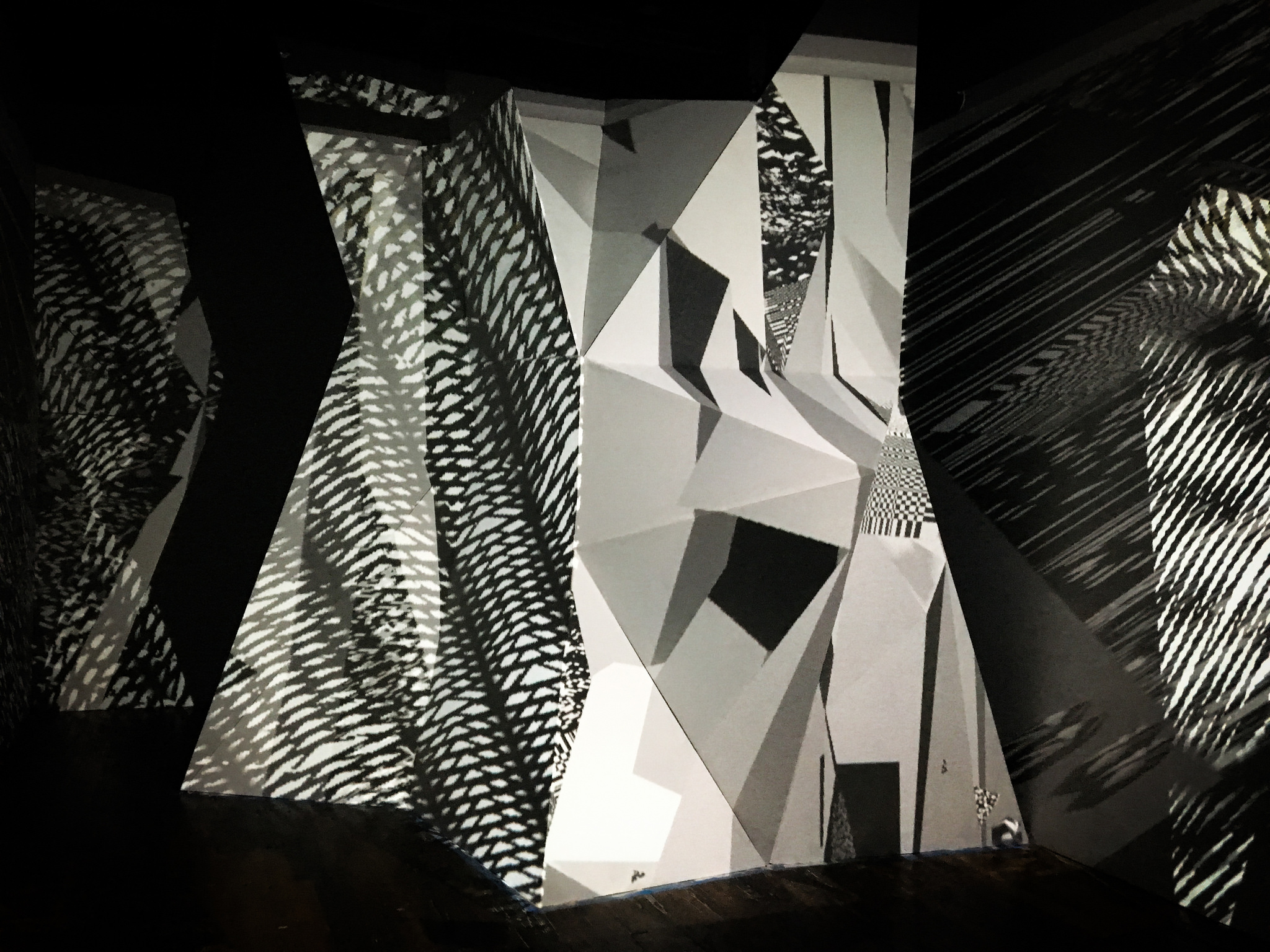

Spomenik (2017)

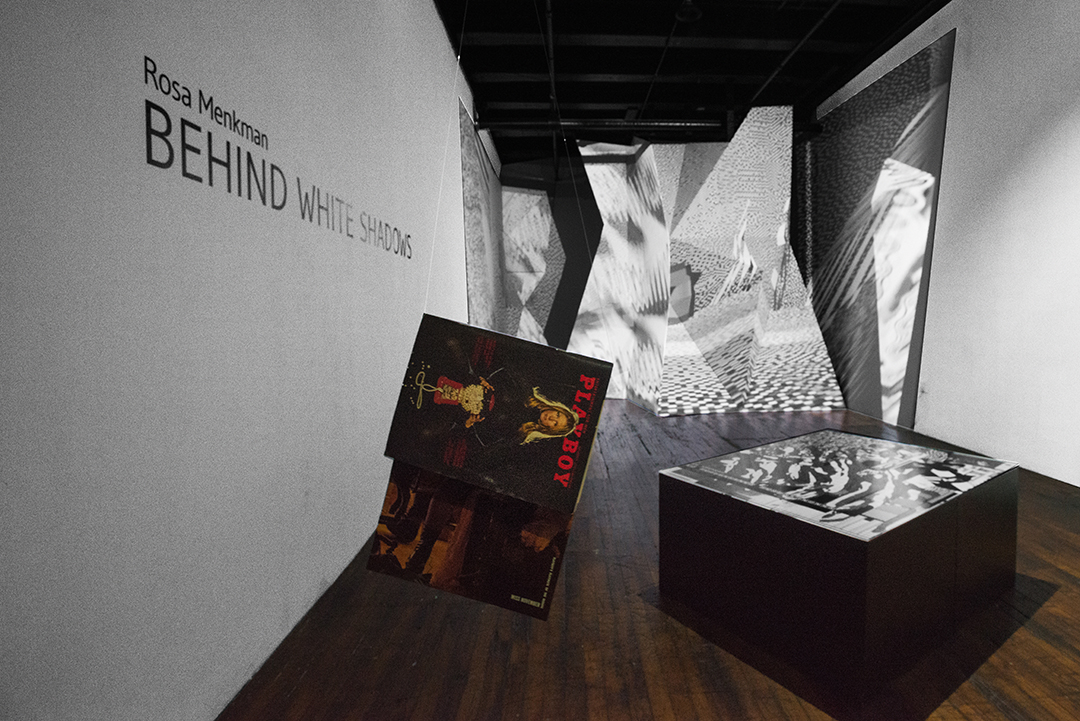

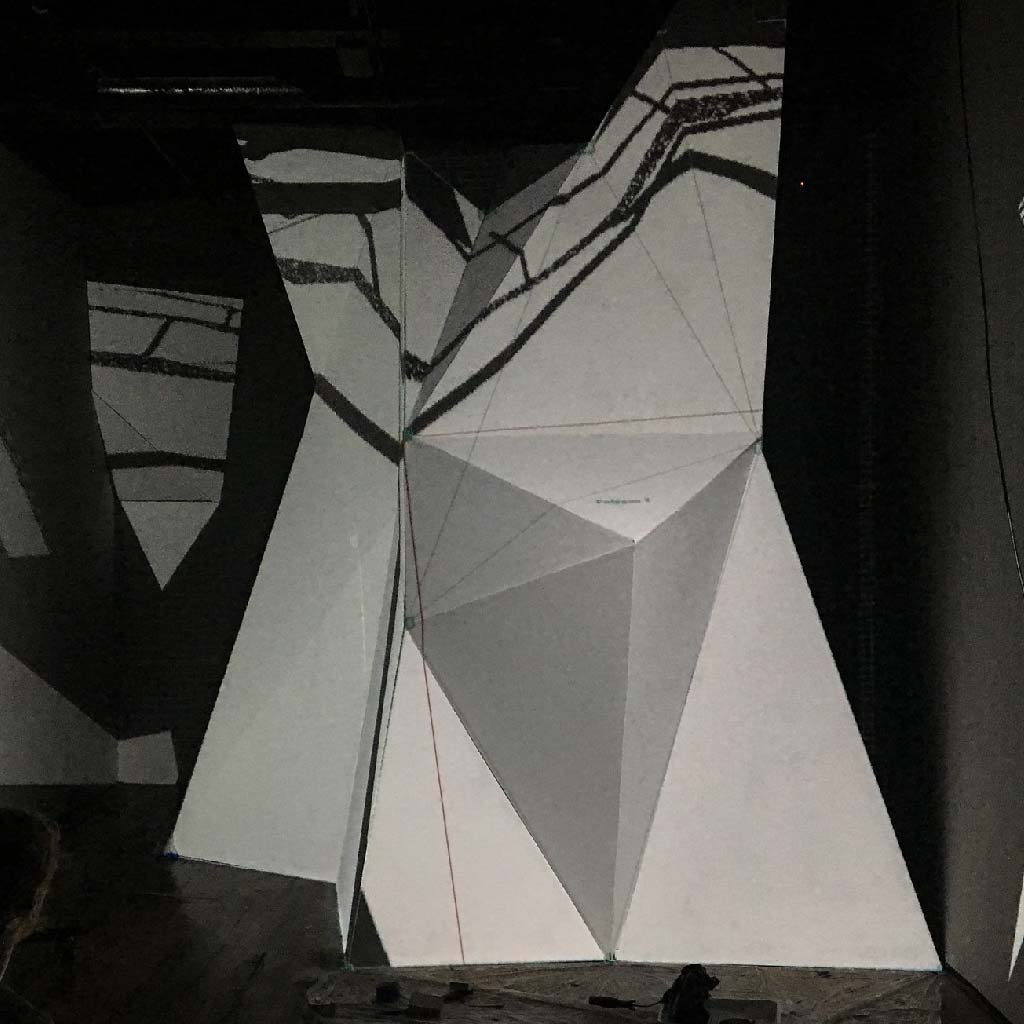

Centrepiece of my Behind White Shadows solo show

(Transfer Gallery NYC, 2017)

The Spomenik is a 3 x 4 meter large-format sculpture, made out of plywood, painted white and featuring projection-mapped videos. The sculpture also hides a little cave in the back where visitors can play VR in peace.

A monument for resolutions that will never be.

The Spomenik is inspired by the Spomeniks of the Balkans: brutalist monumental architecture, historically commemorating “many different things to many people”. Its shape takes Spomeniks such as Tjentiste and Ostra as a reference without directly copying them.

This Spomenik is dedicated to resolutions that are impossible, such as ‘screen objects’ (shards) and the not (yet) implemented possibilities that non-quadrilateral screens have to offer.

The installed shard is three meters high and hides a VR installation running DCT:SYPHONING, accessible from the back of the Spomenik. The projection on the Spomenik is partially a mapped live stream from the VR.

The Spomenik also features textures of the Ecology of Compression Complexities, the map of which was laid out in front.


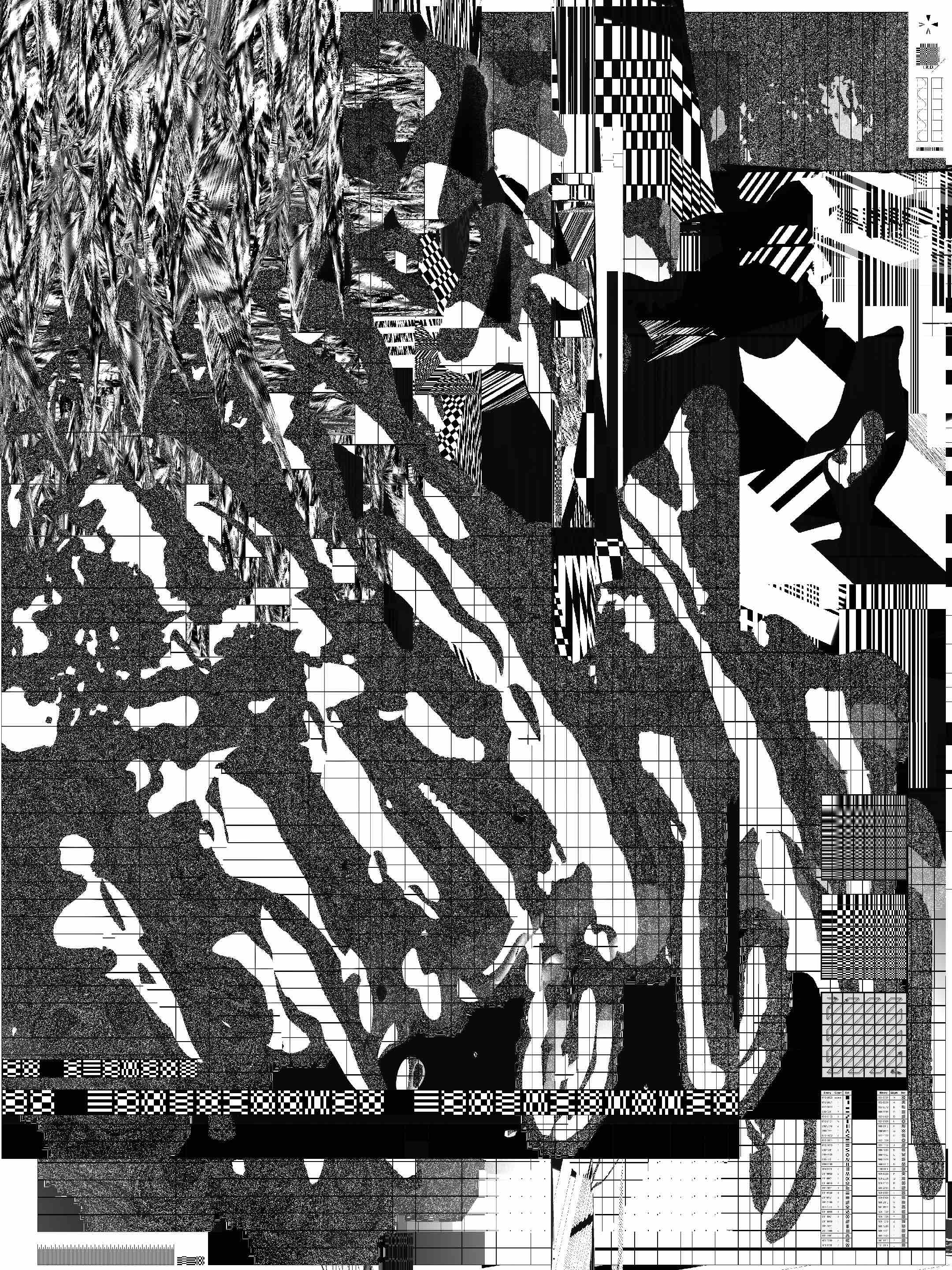

A map of the different complexities of compression artifacts, featuring the realms of:

━ Lines

(interlacing, interleaving, scan line, border, beam)

Collapse of PAL, Tacit:Blue, Beyond Resolution performance,


[archeology of DCT]

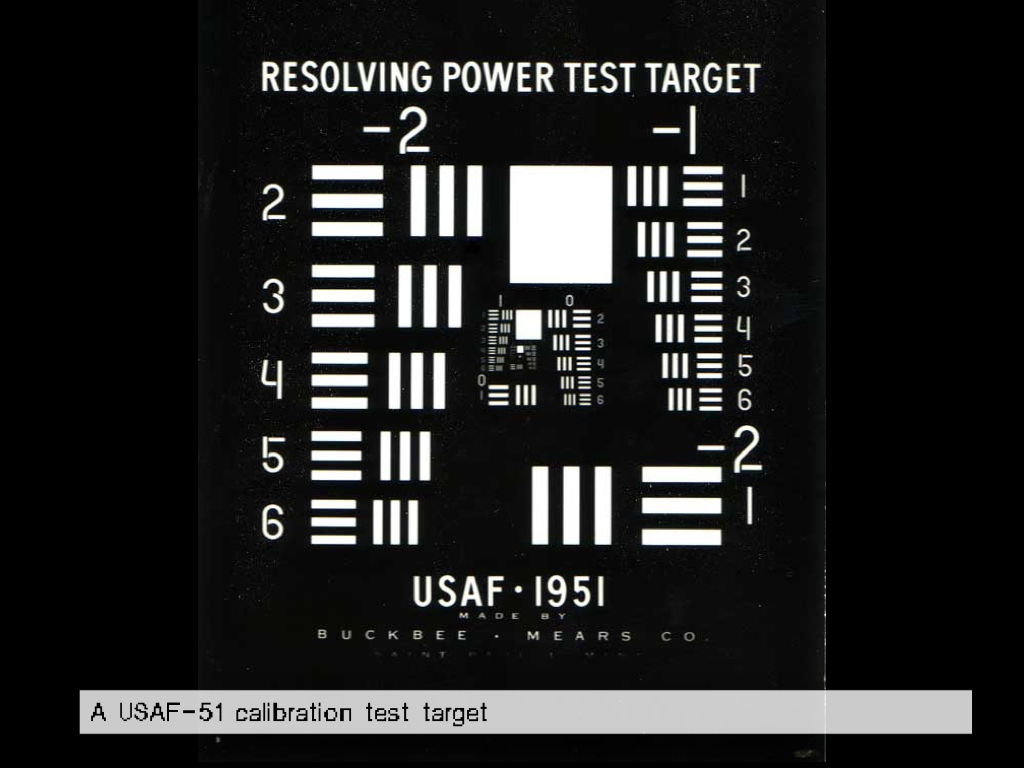

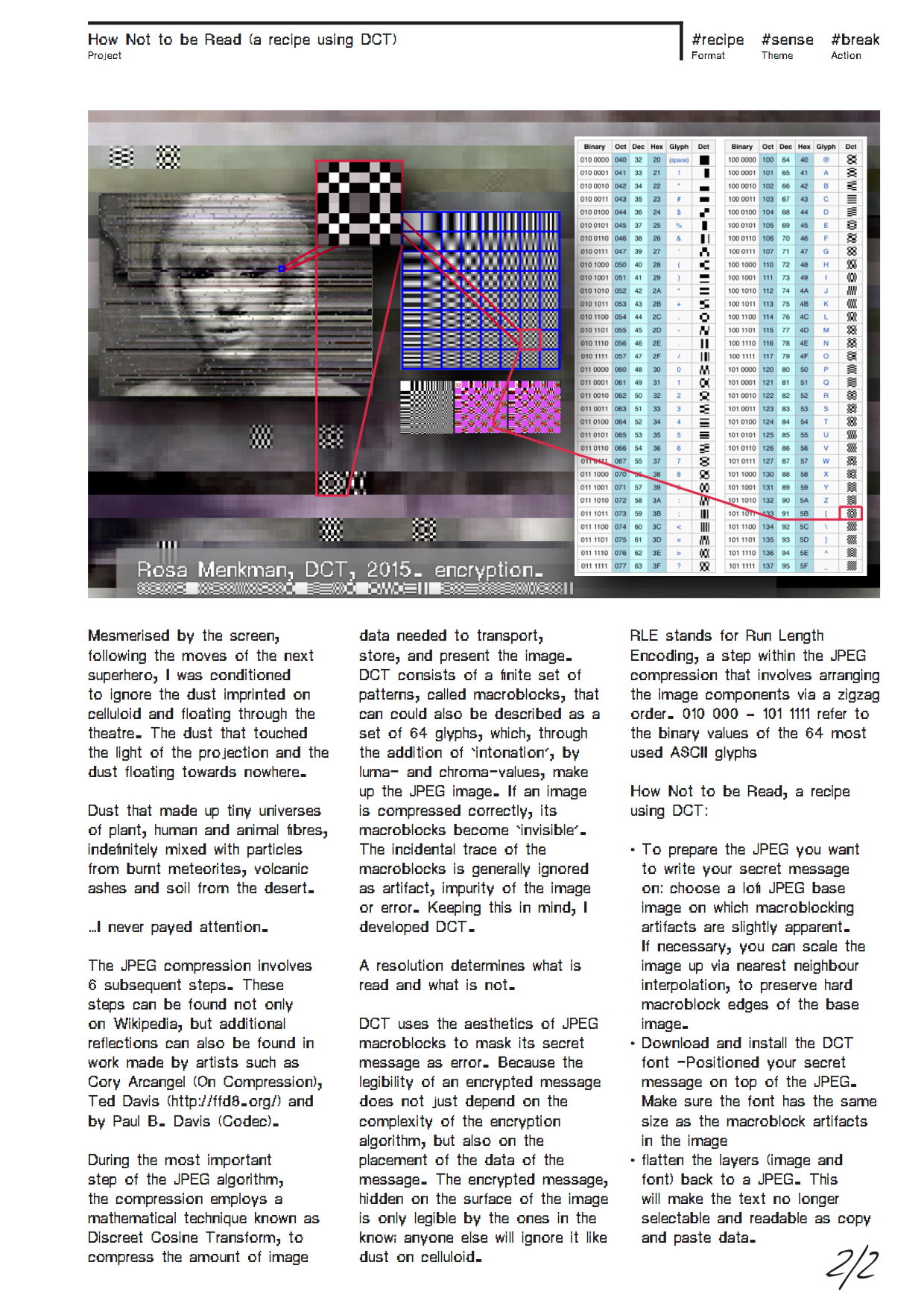

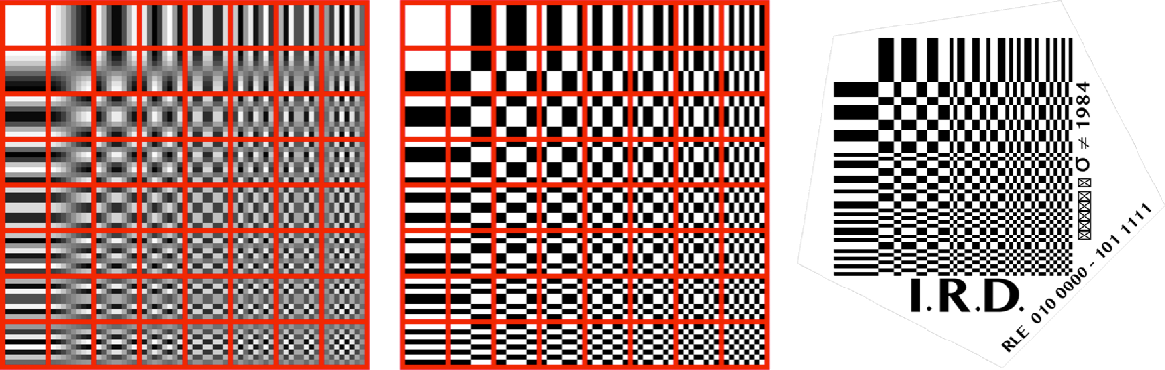

The 64 basis functions of the Discrete Cosine Transform, as used by JPEG (Joint Photographic Experts Group) compression on 8 x 8 pixel macroblocks.
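As a minimal sketch of what this figure shows, the 64 patterns can be generated from the standard orthonormal 8 x 8 DCT-II definition. The function names below are illustrative and not part of the original work.

```python
import math

N = 8  # JPEG works on 8 x 8 pixel blocks


def alpha(k):
    """Normalisation factor of the orthonormal DCT-II."""
    return math.sqrt(1 / N) if k == 0 else math.sqrt(2 / N)


def basis_function(u, v):
    """8 x 8 pattern for vertical frequency u and horizontal frequency v."""
    return [
        [
            alpha(u) * alpha(v)
            * math.cos((2 * y + 1) * u * math.pi / (2 * N))
            * math.cos((2 * x + 1) * v * math.pi / (2 * N))
            for x in range(N)
        ]
        for y in range(N)
    ]


def all_basis_functions():
    """All 64 patterns, keyed by their (u, v) frequency pair."""
    return {(u, v): basis_function(u, v) for u in range(N) for v in range(N)}


patterns = all_basis_functions()
print(len(patterns))
```

The pattern at (0, 0) is a flat block (the average value of the tile); higher values of u and v add progressively finer vertical and horizontal stripes.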

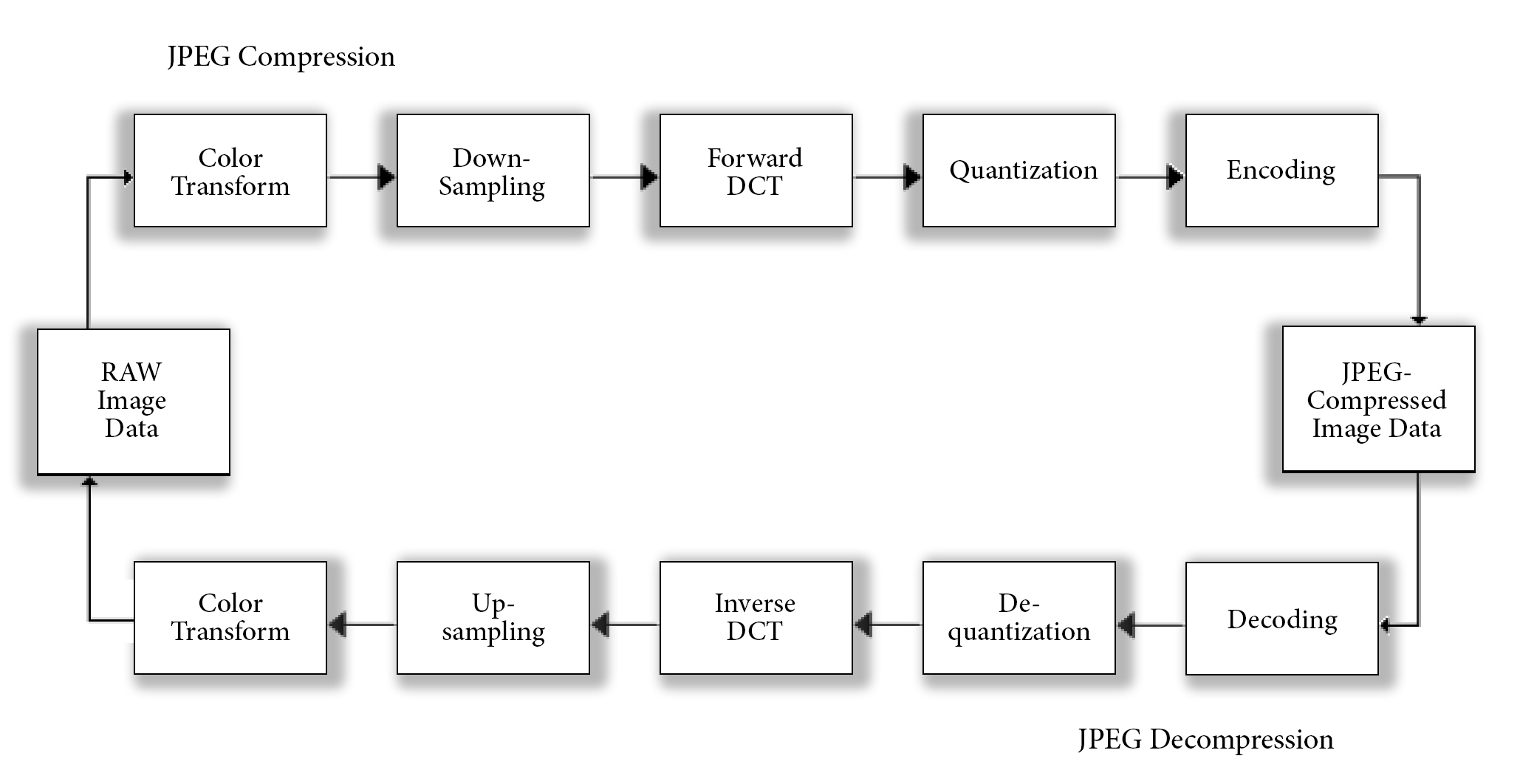

JPEG compression consists of these six consecutive steps:

1. Color space transformation. Initially, the image has to be transformed from the RGB colorspace to Y′CbCr. This colorspace consists of three components that are handled separately: Y′ (luma, or brightness) and the Cb and Cr values, the blue-difference and red-difference chroma components.

2. Downsampling. Because the human eye doesn’t perceive small differences within the Cb and Cr space very well, these components are downsampled, a process that reduces their data dramatically.

3. Block splitting. After the colorspace transformation and downsampling steps, the image is split into 8 x 8 pixel tiles or macroblocks, which are transformed and encoded separately.

4. Discrete Cosine Transform. Every Y′CbCr macroblock is compared to all 64 basis functions (base cosines) of a Discrete Cosine Transform. A value of resemblance per macroblock per basis function is saved in a matrix, which goes through a process of reordering.

5. Quantization. The JPEG compression employs quantization, a process that discards coefficients with values that are deemed irrelevant (or too detailed) visual information. The process of quantization is optimized for the human eye, tried and tested on the Caucasian Lena color test card.

Effectively, during the quantization step, the JPEG compression discards most of the information within areas of high-frequency changes in color (chrominance) and light (luminance), also known as high-contrast areas, while it flattens areas with low frequency (low contrast) to average values by re-encoding and deleting these parts of the image data. This is how the rendered image stays visually similar to the original, at least to human perception. But while the resulting image may look similar to the original, JPEG image compression is lossy, which means that the original image can never be exactly reconstructed.

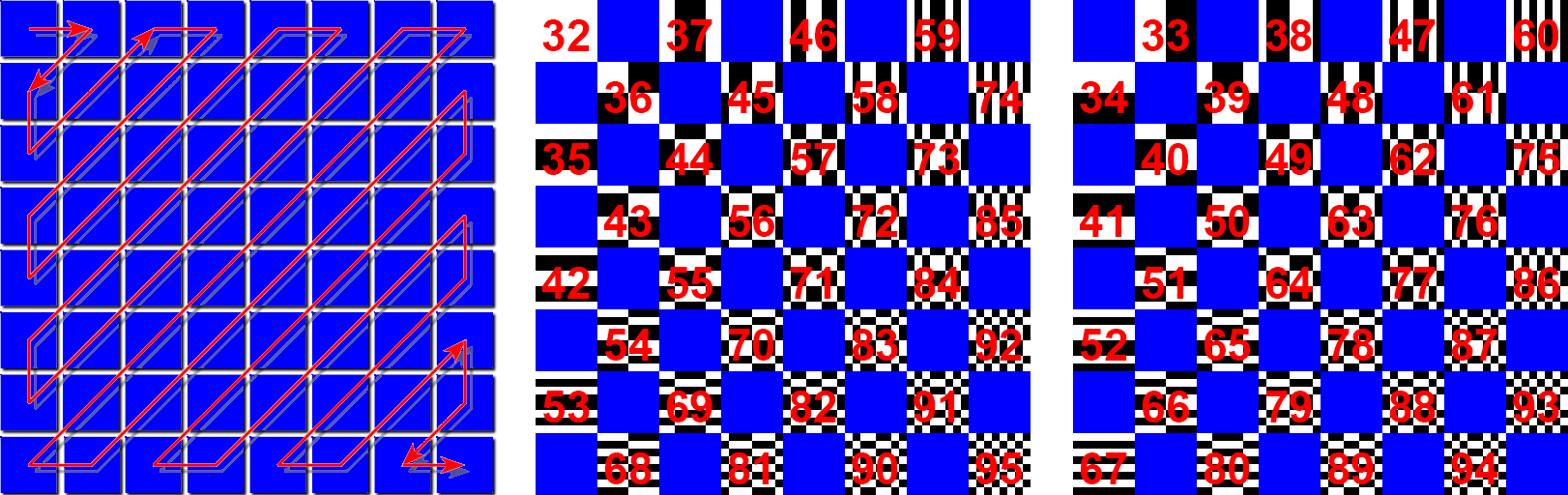

6. Entropy coding. Finally, a special form of lossless compression arranges the coefficients of each macroblock in a zigzag order. A Run-Length Encoding (RLE) algorithm groups similar frequencies together, while Huffman coding compresses what is left.
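The steps above can be sketched in a few lines of plain Python. This is a simplified illustration under stated assumptions, not a conforming JPEG encoder: the colour conversion uses the JFIF constants, a single uniform step size `q` stands in for the 8 x 8 quantization tables that real encoders use, the run-length coder is a generic one (JPEG itself counts runs of zeros between non-zero coefficients), and chroma downsampling and Huffman coding are left out. All function names are hypothetical.

```python
import math

N = 8


def rgb_to_ycbcr(r, g, b):
    """Step 1: JFIF colour transform for 8-bit RGB values."""
    y = 0.299 * r + 0.587 * g + 0.114 * b
    cb = 128 - 0.168736 * r - 0.331264 * g + 0.5 * b
    cr = 128 + 0.5 * r - 0.418688 * g - 0.081312 * b
    return y, cb, cr


def dct_2d(block):
    """Step 4: forward 8 x 8 DCT-II of one level-shifted block."""
    def a(k):
        return math.sqrt(1 / N) if k == 0 else math.sqrt(2 / N)

    return [
        [
            a(u) * a(v) * sum(
                block[y][x]
                * math.cos((2 * y + 1) * u * math.pi / (2 * N))
                * math.cos((2 * x + 1) * v * math.pi / (2 * N))
                for y in range(N) for x in range(N)
            )
            for v in range(N)
        ]
        for u in range(N)
    ]


def quantize(coeffs, q=16):
    """Step 5: divide by a step size and round; small coefficients become 0."""
    return [[round(c / q) for c in row] for row in coeffs]


def zigzag(matrix):
    """Step 6a: read the 64 coefficients from low to high frequency."""
    order = sorted(
        ((r, c) for r in range(N) for c in range(N)),
        key=lambda rc: (rc[0] + rc[1], rc[0] if (rc[0] + rc[1]) % 2 else -rc[0]),
    )
    return [matrix[r][c] for r, c in order]


def run_length(values):
    """Step 6b: collapse runs of equal values into [value, count] pairs."""
    runs = []
    for v in values:
        if runs and runs[-1][0] == v:
            runs[-1][1] += 1
        else:
            runs.append([v, 1])
    return runs


# A block with a soft horizontal gradient, shifted from 0..255 to -128..127.
block = [[(100 + 4 * x) - 128 for x in range(N)] for _ in range(N)]
encoded = run_length(zigzag(quantize(dct_2d(block))))
print(encoded)
```

For a smooth block like this one, almost all quantized coefficients are zero, so the zigzag scan ends in one long run of zeros. That long run is what makes the final lossless step effective.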

Revealing the surface and structure of the image *

<href: Ted Davis: ffd8, 2012>

A side effect of the JPEG compression is that the limits of the image's resolution – which involve not just the image's number of pixels in length and width, but also the luma and chroma values, stored in the form of 8 x 8 pixel macroblocks – are visible as artifacts when zooming in beyond the resolution of the JPEG.

Because the RGB color values of JPEG images are transcoded into Y′CbCr macroblocks, accidental or random data replacements can result in dramatic discoloration or image displacement. Several types of artifacts can appear, for instance ringing, ghosting, blocking, and staircase artifacts. The relative size of these artifacts demonstrates the limitations of the JPEG's data: a highly compressed JPEG will show relatively larger, block-sized artifacts.

▩ [ DCT encryption ]

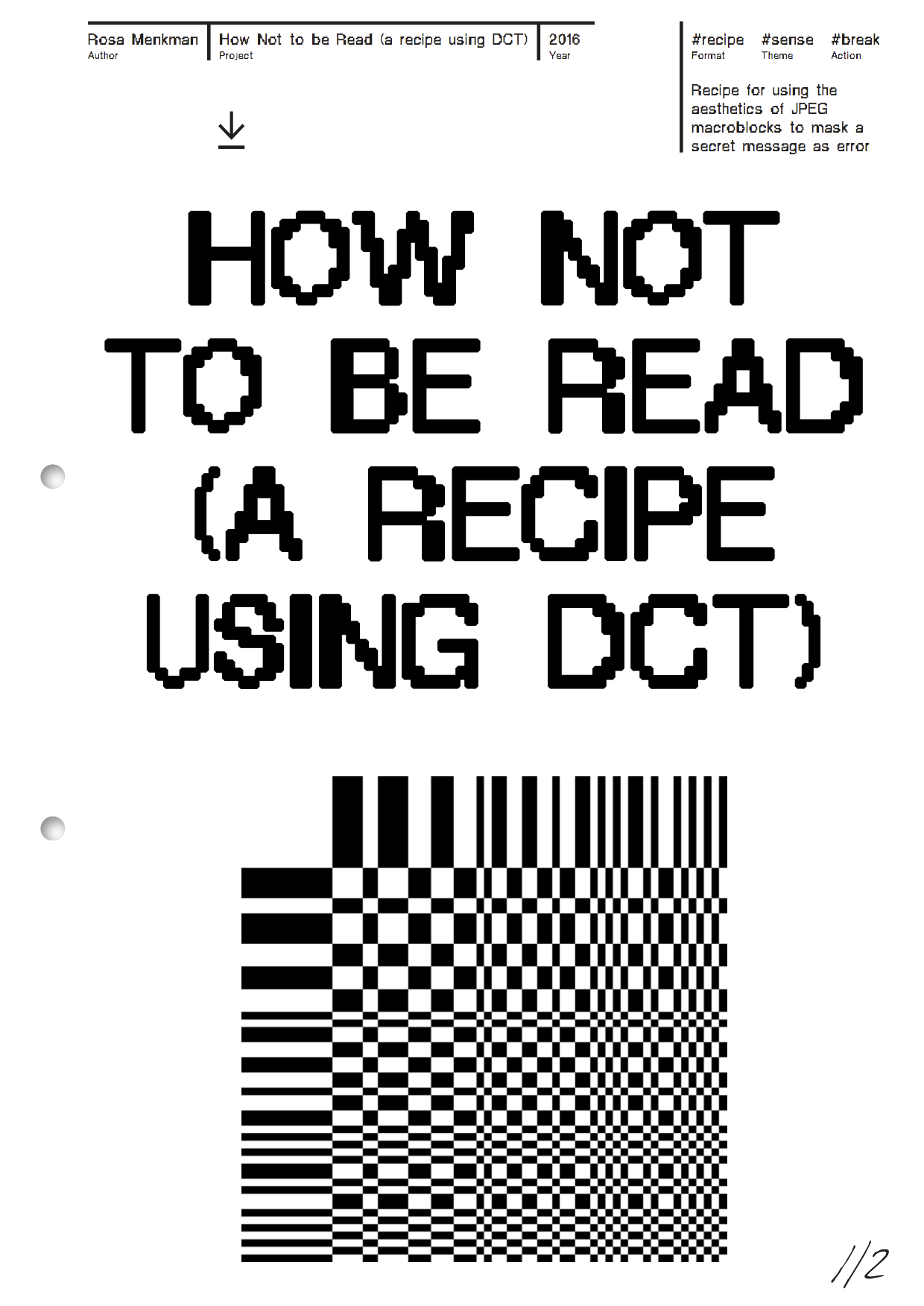

HOW NOT TO BE READ* [ a recipe using DCT ENCRYPTION ]


<href: Hito Steyerl: How Not to Be Seen, 2013>

A PDF with this work is downloadable here

The legibility of an encrypted message does not just depend on the complexity of the encryption algorithm, but also on the placement of the data of the message. Here they are closely connected to resolutions: resolutions determine what is read and what is unseen or illegible.

DCT ENCRYPTION (2015) uses the aesthetics of JPEG macroblocks to mask its secret messages on the surface of the image, mimicking error. The encrypted message, hidden on the surface, is legible only to those in the know; anyone else will ignore it like dust on celluloid.

While the JPEG compression consists of six steps, the basis of the compression is the DCT, or Discrete Cosine Transform. During the sixth and final step, entropy coding, a special form of lossless data compression takes place. Entropy coding involves arranging the image components in a "zigzag" order, using run-length encoding (RLE) to group similar frequencies together.

How Not to be Read, a recipe using DCT:

- Choose a lo-fi JPEG base image on which macroblocking artifacts are slightly apparent. This JPEG will serve as the image on which you will write your secret message.

- If necessary, scale the image up via nearest-neighbour interpolation to preserve the hard macroblock edges of the base image.

- Download and install the DCT font.

- Position your secret message on top of the JPEG. Make sure the font has the same size as the macroblock artifacts in the image.

- Flatten the layers (image and font) back to a JPEG. This makes the text no longer selectable or readable as copy-and-paste data.

Written for the #3D Additivist Cookbook.

DCT won the Crypto Design Challenge Award in 2015.
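The second step of the recipe, scaling up with nearest-neighbour interpolation, can be illustrated without any imaging library. The sketch below treats an image as a list of pixel rows; `upscale_nearest` is a hypothetical helper, and in practice an image editor or imaging library would do this work.

```python
def upscale_nearest(pixels, factor):
    """Enlarge an image by an integer factor, repeating each pixel so that
    block edges stay hard instead of being smoothed."""
    if factor < 1:
        raise ValueError("factor must be a positive integer")
    scaled = []
    for row in pixels:
        wide_row = [value for value in row for _ in range(factor)]
        scaled.extend(list(wide_row) for _ in range(factor))
    return scaled


tiny = [[0, 255],
        [255, 0]]
for row in upscale_nearest(tiny, 2):
    print(row)
```

Because every source pixel becomes a solid `factor` x `factor` square, an 8 x 8 macroblock stays a crisp square after scaling, which is what lets the font glyphs blend in with the existing artifacts.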

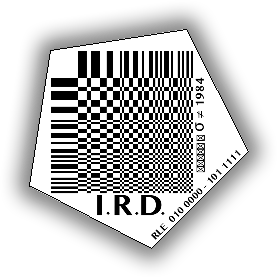

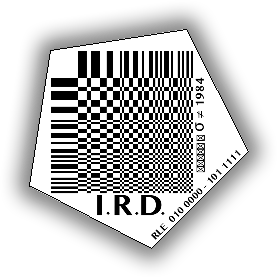

A Discrete Cosine Transform simplified to make a monochrome .ttf font and iRD logo. In the logo RLE 010 000 - 101 1111 signifies the key to the DCT encryption: 010 000 - 101 1111 are the binary values of the 64 most used ASCII glyphs, which are then mapped onto the DCT in a zig zag order (following RLE).
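One possible reading of this key, sketched in Python: if the range in the logo is taken as the 7-bit values 010 0000 to 101 1111 (decimal 32 to 95, which is an assumption about how the digits are grouped), it covers exactly 64 ASCII glyphs, one per DCT basis pattern. The mapping below assigns them along the standard JPEG zigzag path. The function names are illustrative, and the actual font may order its glyphs differently.

```python
N = 8

FIRST = 0b0100000  # 32, the space character (assumed start of the range)
LAST = 0b1011111   # 95, the underscore (assumed end of the range)


def zigzag_positions():
    """(row, column) positions of an 8 x 8 block in JPEG zigzag order."""
    return sorted(
        ((r, c) for r in range(N) for c in range(N)),
        key=lambda rc: (rc[0] + rc[1], rc[0] if (rc[0] + rc[1]) % 2 else -rc[0]),
    )


def glyph_to_pattern():
    """Map each of the 64 glyphs to the basis pattern at its zigzag index."""
    glyphs = [chr(code) for code in range(FIRST, LAST + 1)]
    return dict(zip(glyphs, zigzag_positions()))


key = glyph_to_pattern()
print(len(key))
print(key["A"])
```

Under this reading the glyph set contains the space, punctuation, digits and capital letters, but no lowercase letters.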


Discrete Cosine Transform (DCT) was first conceived for the Crypto Design Challenge

“A recipe using DCT” was released in the #Additivism cookbook.

Mesmerized by the screen, focusing on the moves of a next superhero, I was conditioned to ignore the dust imprinted on the celluloid or floating around in the theater, touching the light of the projection and mingling itself with the movie.

The dust – micro-universes of plant, human and animal fibers, particles of burnt meteorites, volcanic ashes, and soil from the desert – could have told me stories reaching beyond my imagination, deeper and more complex than what resolved in front of me, reflecting from the movie screen.

… But I never paid attention.

I focused my attention to where I was conditioned to look: to the feature, reflecting from the screen. All I saw were the images. I did not see the physical qualities of the light nor the materials making up its resolution; before, behind, and beyond the screen.

Decades of conditioning the user to ignore these visual artifacts and to pay attention only to the overall image have changed these artifacts into the ultimate camouflage for secret messaging. Keeping this in mind, I developed DCT.

The premise of DCT is the realisation that the legibility of an encrypted message does not just depend on the complexity of the encryption algorithm, but also on the placement of the message. Like dust on celluloid, DCT mimics JPEG error: it appropriates the algorithmic aesthetics of JPEG macroblocks to steganographically mask a secret message. The encrypted message, hidden on the surface of the image, is legible only to those in the know; anyone else will ignore it.


DCT at W-I-N-D-O-W-S.net, an exhibition organised by Jamie Allen and Peter Moosgaard

Making the Sun write cryptographic shadow messages with DCT.

DCT at MOTI for the Crypto Design Challenge. Encrypted text:

The legibility of an encrypted message does not just depend on the complexity of the encryption algorithm, but also on the placement of the data of the message.

The Discrete Cosine Transform is a mathematical technique. In the case of the JPEG compression, a DCT is used to describe a finite set of patterns, called macroblocks, that could be described as the 64 characters making up the JPEG image, adding luma and chroma values as ‘intonation’.

If an image is compressed correctly, its macroblocks become ‘invisible’. The incidental trace of the macroblocks is generally ignored as artifact or error.

Keeping this in mind, I developed DCT. DCT uses the aesthetics of JPEG macroblocks to mask its secret message as error. The encrypted message, hidden on the surface of the image, is legible only to those in the know.

MARKER OF THE

DE/CALIBRATION TARGET

DCT REPO //

GENEALOGIES

◧⬒◨◧⬓

HOW NOT

TO BE READ.PDF

▣⬓⬒◨◧

DCT ENCRYPTION

STATION

◨◧◧⬓◨

JPEG

▣▣▣

IM/POSSIBLE IMAGES

◧⬒◨⬓◧

THE i.R.D.

DE/CALIBRATION

ARMY

◧⬒◨⬓◧

SHREDDED HOLOGRAM ROSE

◨◧◧⬓◨

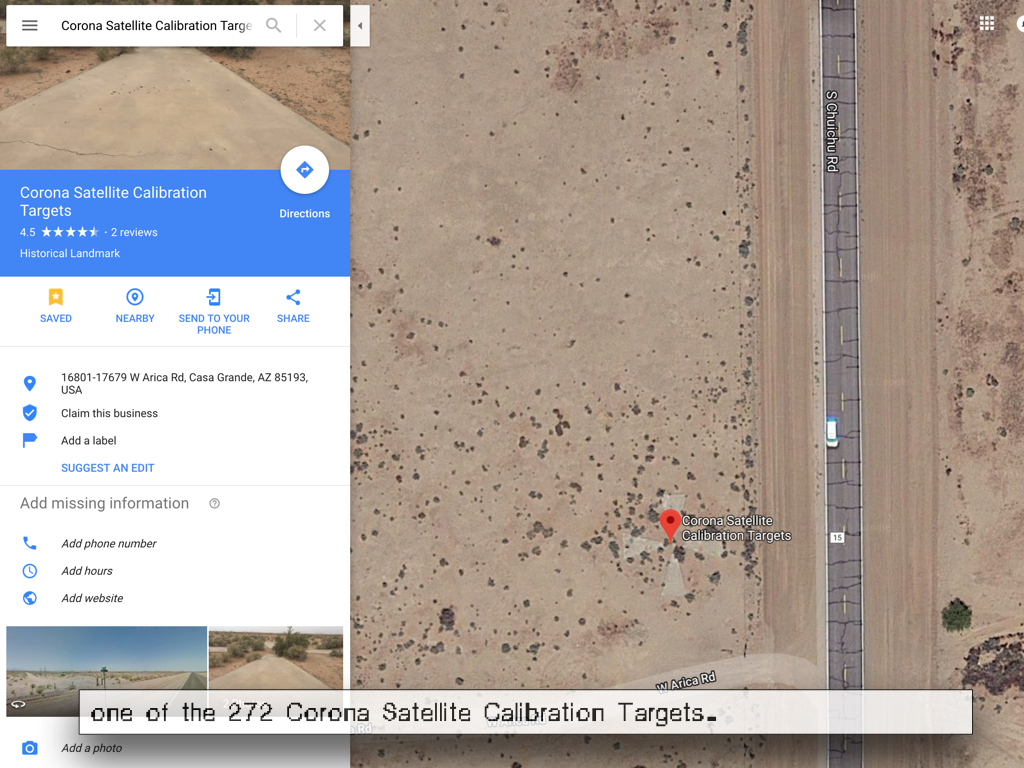

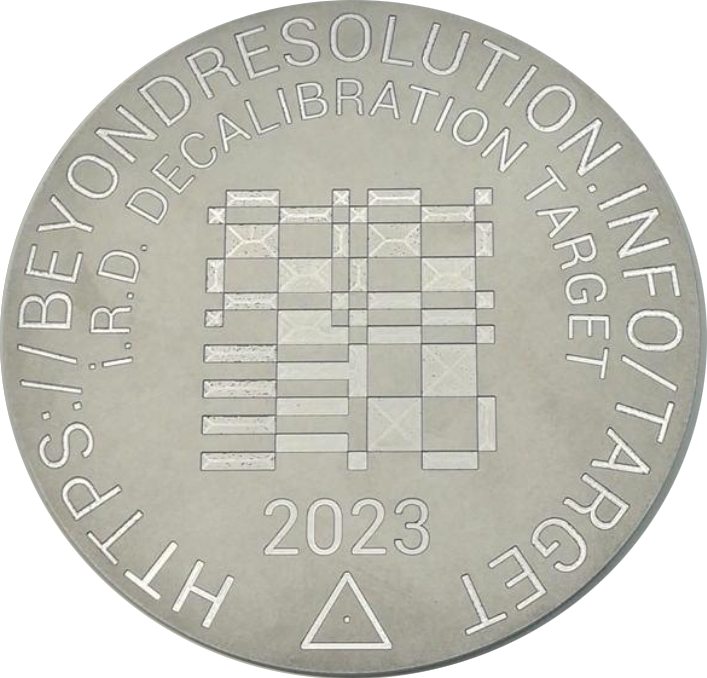

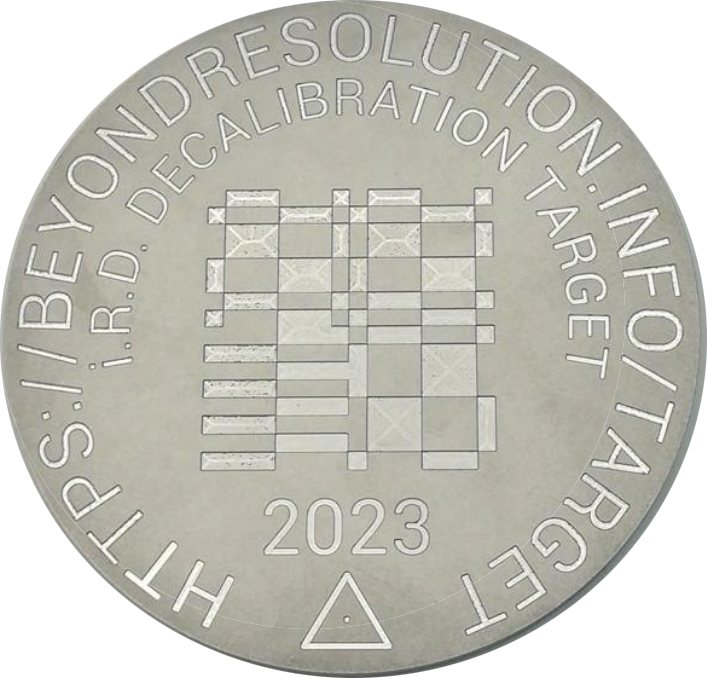

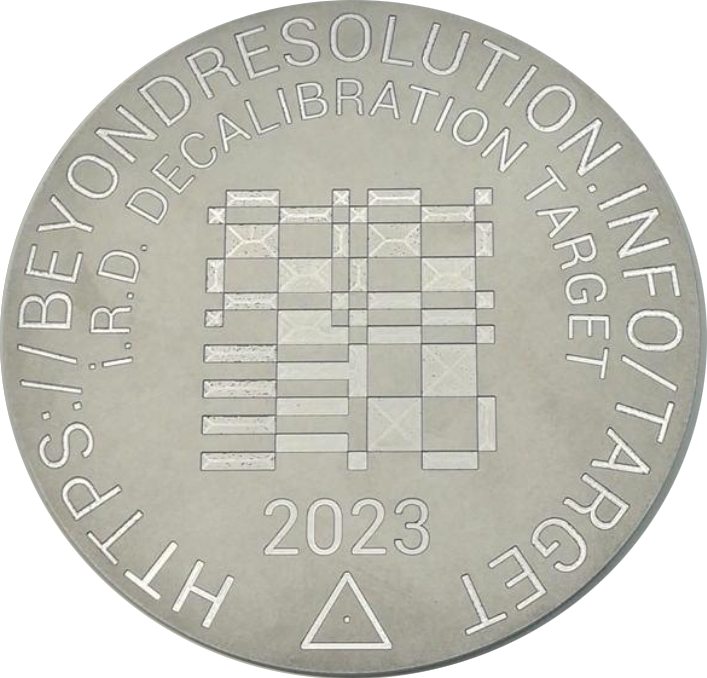

The i.R.D. de/calibration target (2023)

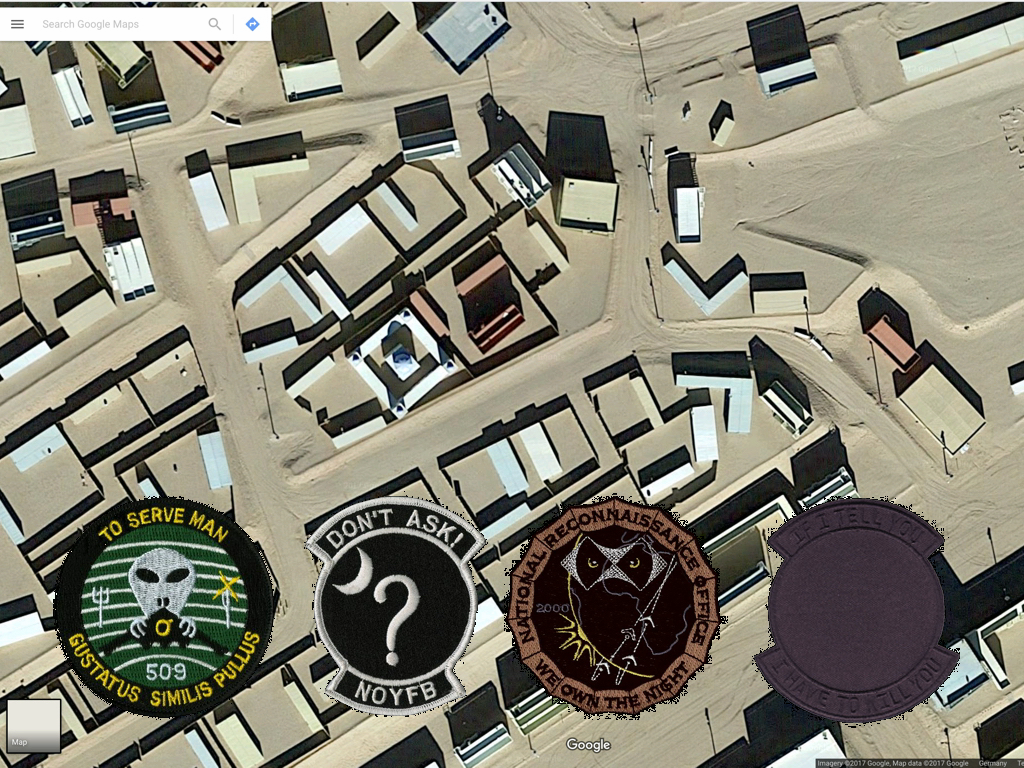

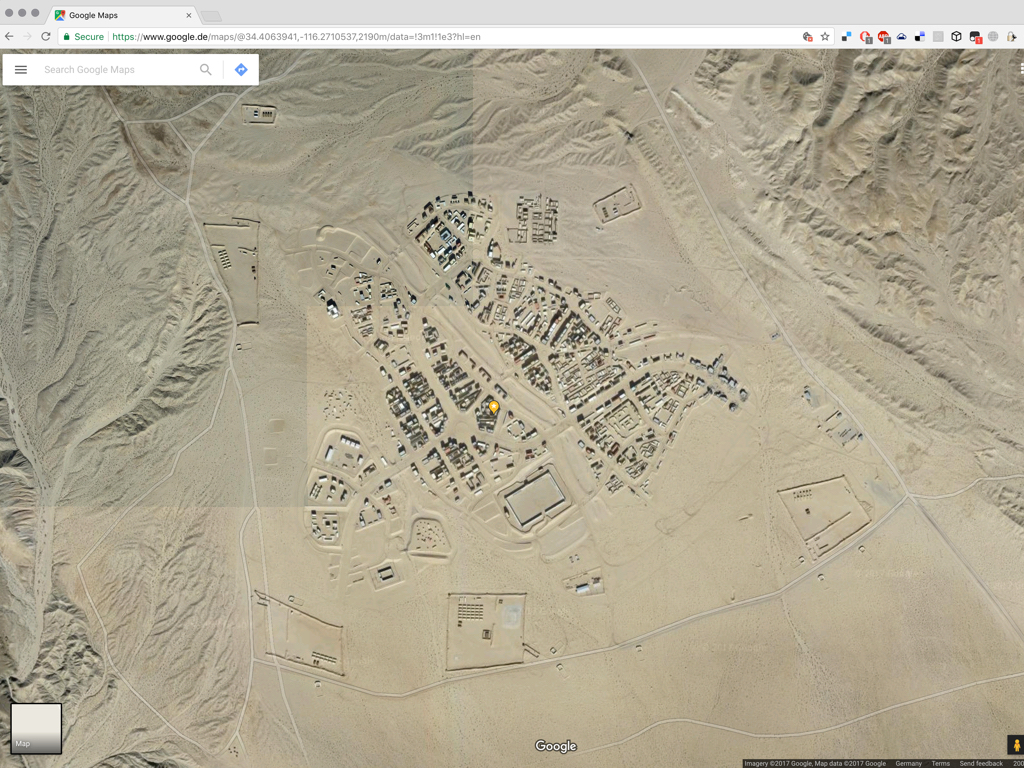

“A blip, a glitch on a satellite photo, an error in the rendering of an image shot by a drone. What might seem a mistake is a freshly painted marker that sits on a rooftop in one of the most historic neighbourhoods of Berlin. Located at the riverside, near the remains of the Berlin Wall, the target functions as an AR beacon. On activation, it offers access to a cache of research about JPEG compression.

This 16 x 16m target can only be read from above, reminding us that resolution, and its recognition, determines what is seen, unseen or illegible. In De/Calibration Target, Rosa Menkman explores what happens when something exists outside our dimensions or system units of scale, and asks what tools are needed to distinguish it from its environment.”

The i.R.D. de/calibration target (which spells FF D8 in DCT, the start of a JPEG image marker) is indexed and seen by satellites, to be distributed and visited online (for now just Bing and Esri world imagery).
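For reference, FF D8 is the two-byte start-of-image (SOI) marker that opens a JPEG file, and FF D9 is the matching end-of-image (EOI) marker. A minimal check in Python, with a hypothetical helper name and hand-made byte strings standing in for real files:

```python
SOI = b"\xff\xd8"  # start of image
EOI = b"\xff\xd9"  # end of image


def looks_like_jpeg(data: bytes) -> bool:
    """True if the byte string opens with the JPEG start-of-image marker."""
    return data[:2] == SOI


print(looks_like_jpeg(SOI + b"\xff\xe0" + EOI))
print(looks_like_jpeg(b"\x89PNG\r\n\x1a\n"))
```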

The marker makes an appearance in the Shredded Hologram Rose, when a media archeologist from the future visits the marker, which houses the DCT Repo, to find information on how to recalibrate (or de-glitch) the obsolete JPEG layer of a broken AI-synthesized Hologram Rose.

Commissioned for Out of Scale, the 2023 transmediale exhibition, the De/Calibration Target can only be seen from above through satellite imagery or taken home as a postcard souvenir of this aerial perspective from transmediale warehouse.

Produced by transmediale, with the support of the Stimuleringsfonds Creative Industries NL.


Performance of the Shredded Hologram Rose during transmediale 2023. Photo: Laura Fiorio, CC BY-NC-SA.

The Shredded Hologram Rose

︎ Published in Angelaki: Shredded Hologram Rose PDF and ePuB

The Shredded Hologram Rose is inspired by "Fragments of a Hologram Rose," a 1977 science fiction short story by William Gibson.

In this short story, a Media Archeologist from the Future - a “100 cycles” in the future - comes home to her Hyper Yves Klein Blue-saturated cube. There, she reflects on her day, which started in misery: she fractured her dear Hologram Rose.

“From the shredded side of a Hologram, one can peek into the 3D objects’ Delta Axis. From this perspective, one can see the Holograms render objects, which form a repository of layered information about the Holograms provenance, metadata, and other information that the unscathed Hologram would never prevail.”

She contemplates the information she gathered during her visit to the DeCalibration Target, where she sourced the i.R.D. information on the DCT, an algorithm that lies at the basis of the now decommissioned JPEG compression, an encoding protocol she will have to understand in order to fix (de-glitch) her beloved Rose.
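The DCT in question is the discrete cosine transform, which JPEG applies to blocks of 8x8 pixels before discarding detail. What follows is a minimal sketch in plain Python, assuming the orthonormal DCT-II and a single uniform quantization step. The function names `dct2`, `idct2` and `quantize` and the step size are illustrative assumptions, not taken from the work or from the quantization tables of the JPEG standard.

```python
import math

N = 8  # JPEG transforms the image in blocks of 8x8 samples


def _alpha(k: int) -> float:
    """Normalisation factor that makes the transform orthonormal."""
    return math.sqrt(1 / N) if k == 0 else math.sqrt(2 / N)


def _basis(x: int, u: int) -> float:
    """Cosine basis function of frequency u sampled at position x."""
    return math.cos((2 * x + 1) * u * math.pi / (2 * N))


def dct2(block):
    """Forward 2D DCT-II: pixel values -> frequency coefficients."""
    return [
        [
            _alpha(u) * _alpha(v) * sum(
                block[x][y] * _basis(x, u) * _basis(y, v)
                for x in range(N) for y in range(N)
            )
            for v in range(N)
        ]
        for u in range(N)
    ]


def idct2(coeffs):
    """Inverse transform: frequency coefficients -> pixel values."""
    return [
        [
            sum(
                _alpha(u) * _alpha(v) * coeffs[u][v] * _basis(x, u) * _basis(y, v)
                for u in range(N) for v in range(N)
            )
            for y in range(N)
        ]
        for x in range(N)
    ]


def quantize(coeffs, step):
    """Hypothetical uniform quantizer: this rounding is where detail is lost."""
    return [[round(c / step) * step for c in row] for row in coeffs]


if __name__ == "__main__":
    flat = [[128.0] * N for _ in range(N)]
    # A flat block has energy only in the top-left (DC) coefficient.
    print(round(dct2(flat)[0][0], 6))
```

A flat grey block collapses into a single non-zero coefficient, while a detailed block spreads across many. Rounding those coefficients is the lossy step, so a damaged or misread coefficient reappears as a glitch across the whole 8x8 block after the inverse transform.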

An earlier short video work was created for the group exhibition Fragments of a Hologram Rose, curated by Rick Silva for Feral File (29 June 2021). The work was extended for IMPAKT Festival: The Curse of Smooth Operations and the NAC Memory Card festival.

The Shredded Hologram Rose references the DeCalibration target and im/possible images.

Inspired and informed by Alan Warburton's RGBFAQ.

About Im/Possible Images

The 2010s have been a very interesting decade for the creation and generation of images of reality and beyond. The discovery of the Higgs boson (2012) and the capturing of the shadow of a black hole (2019) are two of the most prominent examples of science and imagination colliding, shattering the lines of what was previously understood as universal reality. It has been a decade during which it became common knowledge that standard models need extensions, that fields of knowledge continuously scale up and down, and that AI is used pervasively not only to predict the future, but also to see beyond the unseeable. What was once deemed impossible has become possible.

In 2019 I won the Collide at CERN/Barcelona award with my proposal “Shadow Knowledge”, a project about the fringes of what is enlightened (or: not in the dark). Subsequently, I was awarded a two-month residency at CERN, with an additional month in its partnering city of science, Barcelona. In my research proposal, I used the shadow as a metaphor: “In the shadows, things lack definition. The shadows offer shady outlines that can function either as a vector of progress or as a paint by numbers.” I proposed research about what can just be seen or sensed, and about how these new and other techniques can be deployed to derive knowledge about objects of unsupported scale and dimension.

In September that year I finally made it to the largest experiment in the world. But upon arrival at CERN I quickly encountered multiple linguistic clashes. As an artist, I like to use poetic phrases such as Shadow Knowledge, but communicating this way with members of the scientific community remained difficult. Our uses of language did not jibe. Pretty quickly, it became clear I had to cross over to a scientific use of language and find a way to communicate that made sense to the scientists there. The challenge was compounded by differences in language between scientific disciplines, methods, and even experiments.

To consider this challenge I sat down with my two scientific partners: Jeremi Niedziela and Rolf Landua.

With their generous help I reframed my research into a single question:

Imagine you could obtain an 'impossible' image

of any object or phenomenon that you think is important,

with no limits on spatial, temporal, energy, signal/noise or cost resolutions.

What image would you create?

(The answer can be a hypothetical image of course!)

During the three months of my residency I asked every scientist I met this question. Not only for them to consider what images are possible or impossible, but also to probe and isolate the mechanics at work whenever a certain type of image (or rendering) is or becomes ‘impossible,’ or similarly, when a method of rendering gets compromised (deleted, discarded or obsolete). This new method of inquiry worked really well; I rapidly collected countless im/possible images in dialogues with scientists, via email, and through an online form set up for this purpose.

The BLOB of Im/Possible Images // Im/Possible 图像的 BLOB

During the three-month CERN Collide and Barcelona residency I asked every scientist I met this question:

Imagine you could obtain an 'impossible' image

of any object or phenomenon that you think is important,

with no limits on spatial, temporal, energy, signal/noise or cost resolutions.

What image would you create?

(The answer can be a hypothetical image of course!)

The collection of "im/possible" images I gathered reveals both the limitations and possibilities of human imagination and understanding. I compiled the collection into a virtual exhibition named the BLOB (Binary Large OBject) of im/possible images, which offers an immersive environment for visitors to explore and reflect on the nature of imaging. The BLOB not only celebrates the potentialities of images but also emphasizes the constraints and compromises inherent in current image processing technologies.

Background story to the BLOB at the Lothringer 13 Halle.

My collection of images displayed the diverse limits of the image processing technologies in existence. I learned that some images are currently impossible due to certain constraints (e.g. money or knowledge), while others will remain impossible due to the laws of physics and the universe itself. As such they will forever exist in the hypothetical realms of our imagination.

Using the virtual exhibition toolkit New Art City, I installed a selection of my collection of im/possible images in a low poly rendition that I named the BLOB of im/possible images. In front of the BLOB (a Binary Large OBject), the visitor is welcomed by the Angel of History, who points at the BLOB, urging visitors to enter it. A map reminiscent of the realms of Compression Complexities is installed next to her, while behind her the Staircase to Nowhere spirals Northward.

The BLOB was built to give a metaphoric ‘shape’ to the space of all images, past and future. Different Axes of Affordance cut the BLOB. These parameters describe the mechanics that define what is resolved and as a result, what is compromised, or in other words, will not be rendered. While in reality, the space of all im/possible images is fragmented, organized by actions and affordances, stacked by the history of image processing technologies, and consolidated in resolutions, the BLOB offers a space where imaginary propositions are possible. In the BLOB visitors can look and think through images as fluid, released from the otherwise rigid settings, resolutions, affordances and compromises.

Visitors can enter the BLOB through a dark round portal, imaging a Shadow of Dark Matter. Upon entering, they are confronted with A Pale Blue Dot that shines from the depths of the universe, while on the right, The Quantum Vacuum at a Slice of Planck Constant Time flickers rapidly. Right next to it, an image of the insides of a proton shows 3 quarks, the tiniest known building blocks of the universe. Normally, these images are impossible, but the hypothetical realms of the BLOB offer pasture to these im/possible renders.

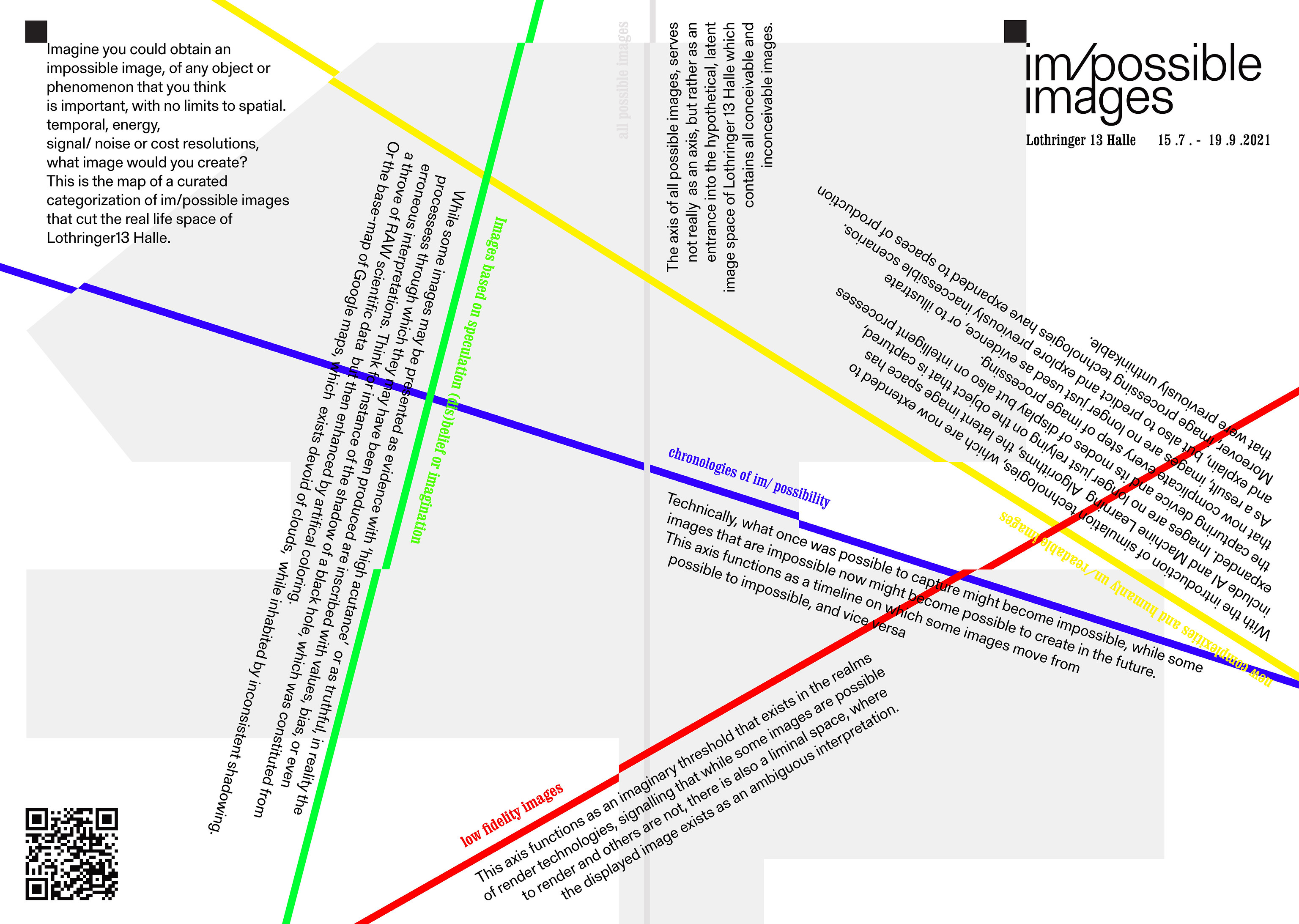

In the summer of 2021, I was invited by Luzi Gross to conceptualize and curate a physical exhibition of im/possible images at the Lothringer 13 Halle (München). The exhibition was an opportunity to transform the Lothringer into a real space actualisation of the virtual BLOB. In the space of the Lothringer five categories of im/possibility were introduced as parameters or axes of affordances. These axes materialized as architectural elements that physically cut through the rooms of the exhibition halls. But we stopped short of calling the Lothringer a BLOB, instead calling the exhibition the latent space of im/possible images.

In analogue photography, the latent image space exists when photosensitive material has been exposed, but has not (yet) gone through a process of development. It is the space that has been touched by light, but that is not showing trace evidence (in the form of an image). The im/possible images show used the term ‘latent image’ as an expanded concept, referring to a hypothetical space that exists before exposure and therefore can still develop every imaginable or unimaginable image.

The main premise of im/possible images is that once any action or decision in the procedure of image processing is initiated, a render parameter is chosen. Following this decision, the parameter operates as a metaphoric cut through the space of everything that can be rendered, dividing it up into images that remain possible, and images that, as a consequence of the decision, are impossible. Effectively, every render setting realizes particular images, while also compromising and complicating others.

In preparation for the show at Lothringer, I went back to my collection of im/possible images. This time, however, I did so not to collect images to show, but to lift out certain categories I wanted to illustrate via work based in artistic research (as opposed to the mostly scientific illustrations featured in the BLOB).

[ white axis ] all possible images

works:

︎Fabian Heller, All Possible Images [True Color, Full HD], Print on PVC, 18x2 metres, 2021.

︎Rosa Menkman, BLOB of Im/Possible Images, 3D world, 2021.

The white axis, the axis of all possible images, starts outside the gallery and serves as the entrance into the hypothetical latent image space of Lothringer 13 Halle. The axis features two works: the BLOB of im/possible images and All Possible Images [True Color, Full HD] (2021). The latter, All Possible Images, is an entirely new, commissioned work by Fabian Heller. In All Possible Images, Heller explores the absolute maximal, finite number of possible renders a Full HD computer screen can display. Given enough time (and screens to render on), any sequence of pixels - or way to fill up a Full HD display - will eventually occur somewhere at some moment in the world. However, the number of images that a Full HD screen can render is immense. It stretches beyond understanding at a human scale - and ushers in a scenario reminiscent of Borges’ Total Library and Émile Borel's infinite monkey theorem. In an essay in the im/possible images reader Heller therefore concludes that All Possible Images comes with its own impossibilities.
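To give a sense of how immense that finite number is, here is a small back-of-the-envelope sketch. It assumes that Full HD means 1920x1080 pixels and that True Color means 24 bits per pixel; the function names are illustrative and do not come from Heller's work.

```python
import math


def bits_per_frame(width: int, height: int, bits_per_pixel: int = 24) -> int:
    """Number of bits needed to describe one complete frame."""
    return width * height * bits_per_pixel


def decimal_digits_of_power_of_two(exponent: int) -> int:
    """Number of decimal digits in 2**exponent, without building the number."""
    return math.floor(exponent * math.log10(2)) + 1


if __name__ == "__main__":
    bits = bits_per_frame(1920, 1080)
    digits = decimal_digits_of_power_of_two(bits)
    # Every frame is one of 2**bits possible bit patterns.
    print(f"one frame = {bits} bits")
    print(f"number of distinct frames = 2**{bits}, a number with {digits} decimal digits")
```

The count itself is therefore a number that is roughly fifteen million digits long; writing it down would already fill several thick books, which is one way to read the "impossibilities" that Heller points to.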

[ red axis ] low fidelity images

works:

︎UCNV, Supercritical, Video installation, 2019.

︎Peter Edwards, Nova Drone, Interactive installation, 2012.

Thirty meters on, the white axis intersects with a red axis named the axis of low fidelity images. This red axis functions as an abstract threshold that exists in the realms of digital rendering technologies, beyond the simple binaries otherwise used to understand whether an image can or cannot be rendered. It features works by UCNV and Peter Edwards.

Images can be amalgamated rather than consolidated. This means that while image data might be static, the final image render is entirely dependent on the discrete steps of the (lofi) image render pipeline. The works exhibited along this red axis illustrate this. They are examples of images existing as non-static formations, or objects of ambiguous technical interpretation, that explore how technological affordances relate to medium specificity, limitation and erroneous consolidation. For instance, Edwards's audiovisual synthesizer, the Nova Drone, centers around an LED pulsating at high frequency, translated as image-information by the image sensor (the light-capturing chip) found in most cell phones. Due to the mismatch in frequency between the light pulse of the LED in the Nova Drone and the speed at which the chip of the phone camera collects and writes away the light, a questionable interpretation appears in the memory - the photo roll - of the phone. Whereas Edwards shows how a mismatch in time resolution by our devices can resolve into beautiful, but erroneous, representations, UCNV illustrates how mechanics play out at the level of pixel resolution. His work Supercritical, also represented in an essay in this reader, demonstrates how changes in quantitative pixel resolution result in ambiguous, fluid data morphing. This mechanism is taken one step further in Daniel Temkin’s contribution to this reader, a how-to guide titled “Unprintables”, which is a manual for creative quantitative pixel resolution mis-use.
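The frequency mismatch behind the Nova Drone images can be approximated with a toy model. The sketch below assumes a sensor that reads out its rows one after another (a rolling shutter) and samples each row instantaneously. Neither assumption comes from the text, and the row count, frame rate and LED frequencies are hypothetical values chosen for illustration.

```python
from fractions import Fraction


def led_is_on(t: Fraction, led_hz: int) -> bool:
    """Square-wave LED with a 50% duty cycle: on during the first half of each period."""
    return (t * led_hz) % 1 < Fraction(1, 2)


def capture_frame(rows: int, frame_rate_hz: int, led_hz: int) -> list:
    """Read the rows one by one; each row sees the LED at a slightly later moment."""
    row_time = Fraction(1, frame_rate_hz * rows)  # assumed time spent per row
    return [1 if led_is_on(r * row_time, led_hz) else 0 for r in range(rows)]


def count_bright_bands(frame: list) -> int:
    """Count runs of consecutive bright rows in the captured frame."""
    bands, previous = 0, 0
    for value in frame:
        if value == 1 and previous == 0:
            bands += 1
        previous = value
    return bands


if __name__ == "__main__":
    for led_hz in (60, 600, 1500):
        frame = capture_frame(rows=1080, frame_rate_hz=60, led_hz=led_hz)
        print(led_hz, "Hz ->", count_bright_bands(frame), "bright bands")
```

In this model the LED never shows up as a single steady light: its pulse is written into the frame as horizontal bands, and the number of bands changes with the ratio between the LED frequency and the readout speed of the sensor.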

[ green axis ] images based on speculation, dis/belief or imagination

works:

︎Susan Schuppli, Can the sun lie? Video, 2014–2015

︎Sasha Engelmann & Sophie Dyer, Open Weather, Installation & Workshop, 2020-2021

︎Rosa Menkman, Whiteout, video (15 min.), 2020

︎Quote by Ingrid Burrington "forever noon on a cloudless day"

︎NASA basemap of Earth.

A green axis titled images based on speculation, dis/belief or imagination runs almost perpendicular to the red axis. The works installed along this axis show the complex dynamic in images that are presented as evidentiary, truthful or scientific. They illustrate that all processes of image sourcing inevitably inscribe them with values, bias or even faulty interpretations.

One evocative example in the exhibition is the basemap of planet Earth: a map completely devoid of clouds, inhabited by inconsistent shadowing and a blanketing noon time zone - or as Ingrid Burrington rightfully states: on the basemap of planet Earth, it is “forever noon on a cloudless day.” In this reader there are three texts accompanying the green axis. First, a reprint of Susan Schuppli’s formidable essay Can the Sun Lie? (2014-2015), in which she shares a striking account of how a system of belief can thwart scientific inquiry. Second, the manual for the Open Weather workshop that was used during the im/possible summer school at the Lothringer. With the help of this manual, one can listen to the NOAA satellites and decode their radio transmissions to create a local weather report that goes beyond values of precipitation and temperature and highlights under-represented values like smog, light or other environmental conditions (of pollution). The axis concludes with “Whiteout”, in which I tell the story of an exhausting mountain hike during a snowstorm and the experience of the loss of my physical sensations - leading to an inability to see, hear, or orient myself. While the spatial dimensions are at first seemingly wiped out, an experience of oversaturation starts to offer the environment to me through new and different imagery.

documentation:

︎Röntgen, Röntgen photo, print, 1896

︎NASA Voyager / Carl Sagan, Pale blue dot, 1990

︎Medipix, spectroscopic X-ray 2018

[ yellow axis ] new complexities and humanly un/readable images

works:

︎Alan Warburton, RGB FAQ, Video essay (27:38 min), 2020

︎Memo Akten, Learning to See: Gloomy Sunday, HD Video (3:02 min), 2017

︎Rosa Menkman, Shredded Hologram Rose (4:30 min) 2021

Finally, the yellow axis presents new complexities and humanly un/readable images. This axis focuses on new layers of image processing, added since the advent of AI image generation. During the exhibition in the Lothringer, Alan Warburton's video essay RGBFAQ, of which the text is reproduced in this reader, was shown in the basement. In RGBFAQ, Warburton asks what the computational image is. Completing the axis are Memo Akten's Learning to See (2017) and The Shredded Hologram Rose (Rosa Menkman, 2021).

The Im/Possible Images Reader

I initiated my - still in progress - research on im/possible images with a single question: Imagine you could obtain an 'impossible' image of any object or phenomenon that you think is important, with no limits on spatial, temporal, energy, signal/noise or cost resolutions. What image would you create? (the answer can be a hypothetical image of course!)

The Im/Possible Images Reader represents a moment in my journey to reflect on my progress and constitutes a collection of texts that range from short stories, to image work, manuals, (technical) documentation and essays that I consider to constitute valuable responses to this question. Some of these materials were specifically written for this reader, whereas others have been generously allowed to be reprinted.

The organization of this publication is modular; the chapters can be read independently. Despite this, we chose the order in which we present them for a reason: to provide a consistently additive flow that builds outwards from the five axes of the exhibition.

In conclusion, I would like to stress that after releasing this im/possible images reader, im/possible images will remain an active research platform, which can be visited here:

www.beyondresolution.info/impossible

Part of the research for this reader was conducted in the framework of the Alex Adriaansens Residency at V2_Lab for the Unstable Media. Thanks to director Michel van Dartel and curator Florian Weigl.

MATERIALS

︎Rosa Menkman & Luzi Gross – im/possible images introduction and exhibition documentation

︎Fabian Heller – All Possible Images

︎UCNV – Into Supercritical

︎Daniel Temkin – Unprintables

︎OPEN WEATHER (Sasha Engelmann & Sophie Dyer) – The Im/Possible Weather Station & Workshop

︎Susan Schuppli – Can the Sun Lie?

︎Rosa Menkman – Whiteout

︎Alan Warburton – RGBFAQ

︎Klasse Digitale Grafik HfBK Hamburg – projects on im/possible images

Whiteout (2020)

︎ read: PDF Essay as published by Photoresearcher

Whiteout is based on an essay of the same title, originally written for AX15 (see below)

The sound starting from around minute 9 is from a live performance recording by Mario de Vega.

The stroboscopic images are all from the slides of the two-year colloquium “Resolution Studies”.

Whiteout is inspired by my time during the CERN Collide, our climb of the Brocken and a voyage on board a ship of the Chilean army to Antarctica.

In Whiteout, I tell the story of an exhausting hike during a snowstorm. As we make our way up the mountain, I experience the loss of physical sensations - leading to an inability to see, hear, or orient myself. While at first I knew what direction I was going, now, the spatial dimensions are wiped out. I experience oversaturation by nothing - as the environment starts to offer itself in new ways.

I ask myself, what does it mean, to move without visual or auditory references

or to physically plot a course when there is no conventional sense of direction or even horizon?

To feel oversaturated, by a lack of anything? What new ways of sensing can be found?

︎ ARTFORUM write up: ArtForum, December ‘21, page 207

Cyclops Retina

In collaboration with Kimchi and Chips.

Commissioned Install for Thin Air @The Beams, on till the 4th of June, London, UK.

In Cyclops Retina, Kimchi and Chips and Rosa Menkman combine their contrasting research into light as both a material and a neurological phenomenon to create an experimental video essay presented in an experimental format. The specially commissioned narrative, written and narrated by Menkman, takes us on her journey into the cave of a cyclops so that she might learn how to see into the future. This journey is illustrated by millions of beams of light, which are merged in the haze to create floating graphics in the air. The installation and narrative delve into unconventional modes of vision, pushing boundaries and exploring new ways of experiencing light and perception.

DE/CALIBRATION TARGET

&& DCT REPO

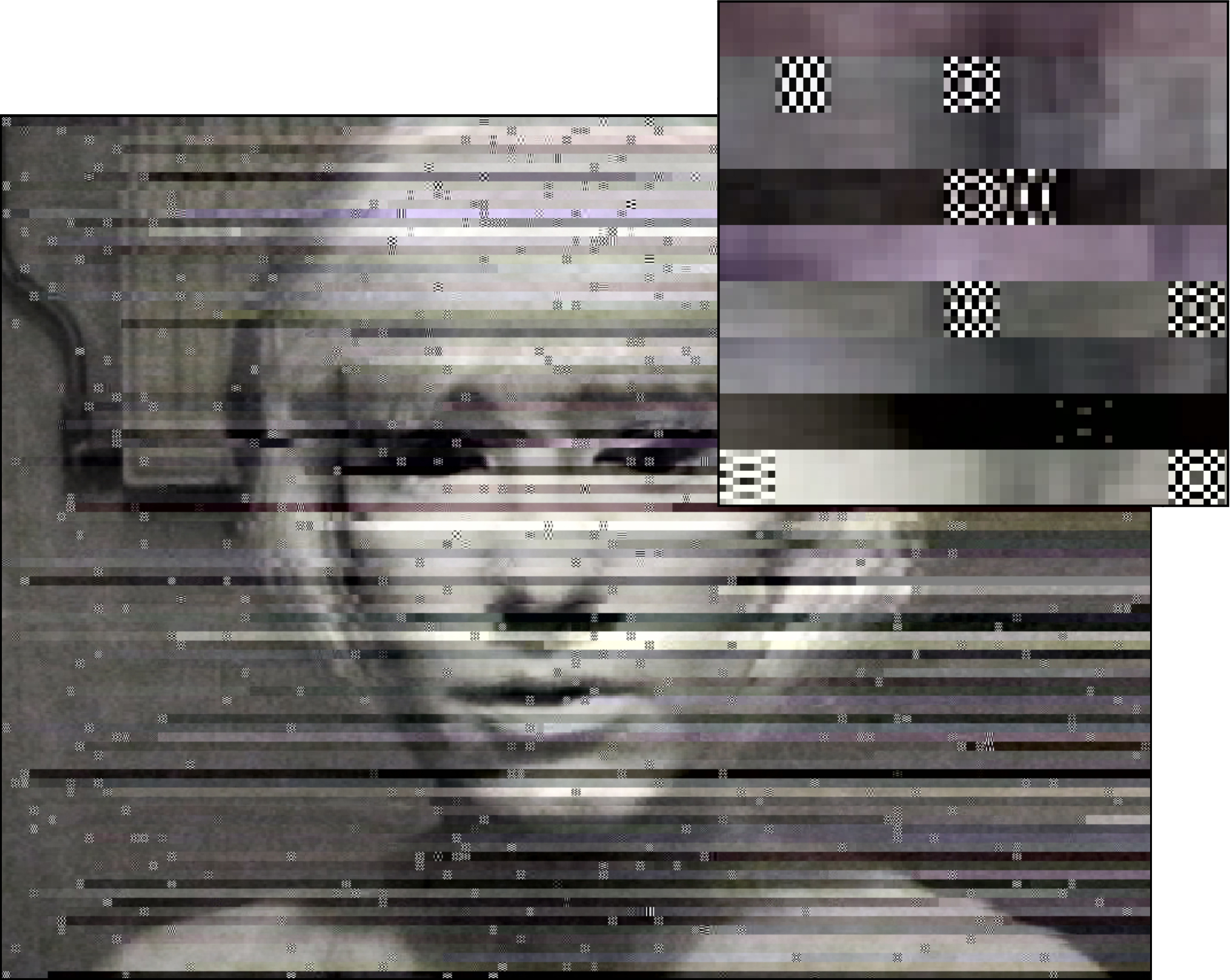

THE i.R.D. 365 PERFECT DE/CALIBRATION ARMY

365 Perfect is an app for the mobile phone that describes itself as:

“the best FREE virtual make-up app, period.

It’s like having a glam squad in your pocket”

The free app offers virtual photo make-up, including filters such as ‘delete blemishes’ and ‘brighten or soften skin’.

It can also deepen the smile, add lipstick or lip tattoos, enlarge the eyes and make the face slimmer,

lift the cheeks, enhance the nose, or resize the lips.

Change the colour of your irises.

whiten the teeth, lengthen your lashes, and even groom your brows.

I used the app on the most widespread and even famous "white shadows"

- the Caucasian ladies that were used as colour test cards for image processing -

not once, not twice, but hundreds of times over and over ...

... so much perfection ...

With every tuning of the face, the portraits would turn just one shade lighter, slimmer or smoother...

until the newly beautified faces would move from exaggeration to

showcasing the absurdity of beautifying standards.

︎ more information